OpenNebula Systems joined thousands of AI leaders, engineers, and innovators at NVIDIA GTC 2026 in San Jose, USA, where one thing was clear: AI is no longer experimental. It’s operational. And infrastructure is now the main event.

At our booth, we connected with a wide range of stakeholders, from neocloud providers and telcos to HPC centers actively building AI factories, turning high-level conversations into concrete architectural discussions. Across the board, the challenge was consistent: how to move from raw GPU infrastructure to production-ready, sovereign AI cloud services.

Showcasing OpenNebula on the Global Stage

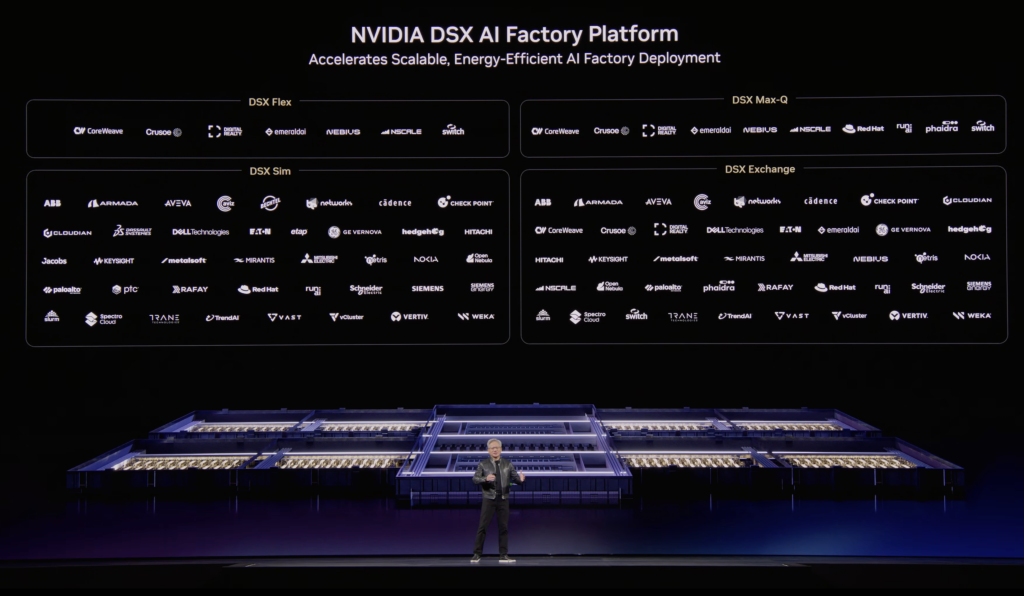

GTC 2026 marked an important moment of visibility for OpenNebula. During Jensen Huang’s keynote, OpenNebula was featured as part of the NVIDIA DSX ecosystems: frameworks designed to enable secure integration, simulation, and validation of AI factory environments.

Being showcased in this context reinforces OpenNebula’s role as a key enabler in the emerging AI factory paradigm, where infrastructure must seamlessly connect physical resources, digital twins, and operational workflows.

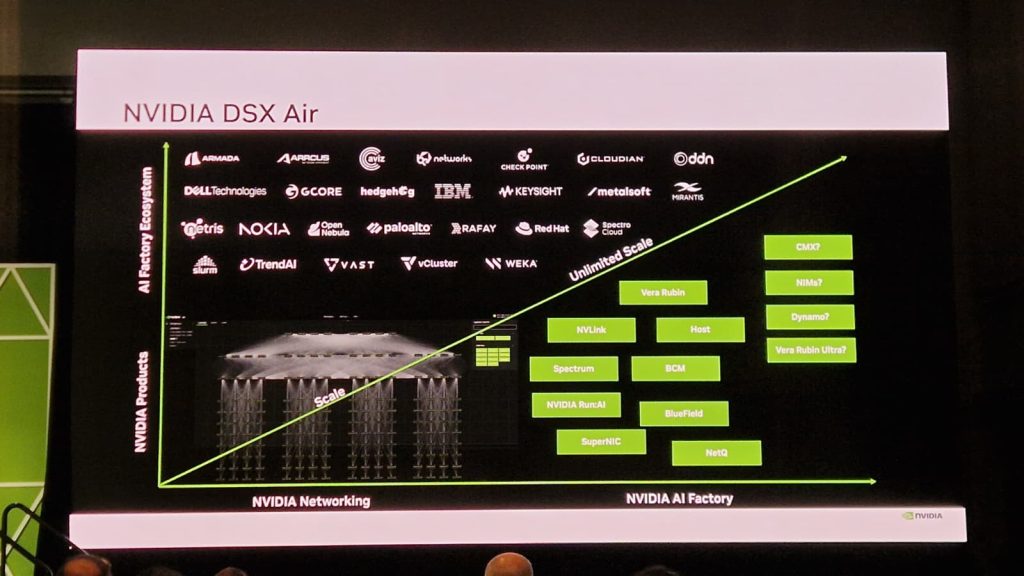

OpenNebula was also highlighted during an NVIDIA Air session, which showcased its capabilities for AI infrastructure deployment and simulation workflows. This visibility further reinforces OpenNebula’s role within NVIDIA’s ecosystem, particularly in enabling reproducible, testable environments for AI factory architectures.

From Bare Metal to AI Factories: A Unified Path

A major highlight of the event was the announcement of the integration between OpenNebula and the NVIDIA NCX Infra Controller, enabling a unified path from bare-metal GPU servers to fully instantiated AI factory environments.

This integration significantly streamlines the deployment lifecycle, bridging the gap between infrastructure provisioning and AI service delivery. Organizations can now move faster from hardware to operational AI platforms while maintaining control, sovereignty, and efficiency across the stack.

In a landscape where speed and scalability define competitiveness, this represents a critical step toward operationalizing AI at scale.

Ready to bridge the gap between GPU infrastructure and production AI services? Explore how OpenNebula and NVIDIA NCX Infra Controller enable a unified, automated path to building scalable, sovereign AI factories here.

Virtualizing NVIDIA GB200 NVL4

At the booth, we showed a live demo around NVIDIA GB200 NVL4, which is a very different class of system compared to what most people are used to. With Grace CPUs, Blackwell GPUs, and high-bandwidth interconnects, these platforms are clearly designed for large-scale AI training and inference from the ground up.

The real question for cloud and AI factory operators is not the hardware itself; it’s how to operate it. More specifically, how do you virtualize something like this without losing performance?

What we demonstrated is that this is possible using PCI passthrough. OpenNebula provides virtual machines with direct access to the GPUs, so you keep native performance while still getting the benefits of virtualization, like isolation and lifecycle management.
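As a rough illustration of what requesting passthrough GPUs can look like, the sketch below renders the `PCI` section of an OpenNebula VM template, with one entry per GPU. It is not the configuration used in the booth demo: the helper name `pci_passthrough_section` is hypothetical, and filtering by NVIDIA’s vendor ID (`10de`) and the 3D-controller class (`0302`) is an assumption that should be checked against what the host actually reports for its devices.

```python
# Minimal sketch: build the PCI passthrough section of an OpenNebula VM template.
# Assumption: GPUs are selected by vendor and class only; a real deployment may
# also need a DEVICE filter taken from the host's monitored PCI devices.

NVIDIA_VENDOR_ID = "10de"      # NVIDIA's PCI vendor ID
CLASS_3D_CONTROLLER = "0302"   # PCI class code for a 3D controller


def pci_passthrough_section(gpu_count: int,
                            vendor: str = NVIDIA_VENDOR_ID,
                            pci_class: str = CLASS_3D_CONTROLLER) -> str:
    """Return one PCI template entry per requested GPU (hypothetical helper)."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    entry = f'PCI = [ VENDOR = "{vendor}", CLASS = "{pci_class}" ]'
    return "\n".join([entry] * gpu_count)


if __name__ == "__main__":
    # Print the template fragment for a VM that asks for two GPUs.
    print(pci_passthrough_section(2))
```

Each `PCI` entry asks the scheduler for one matching device on the host, so the VM only lands where enough free GPUs are available.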

We also covered how this fits into a broader setup. This includes GPU access through passthrough, support for MIG when you need to split resources across tenants, and integration with automated provisioning of the underlying infrastructure. The idea is not just to run a single workload fast, but to make these systems usable in a multi-tenant environment without turning them into static clusters.

If you’re looking at operating GB200-class systems in a real environment, you can take a closer look at how OpenNebula handles this here.

Securing North–South Traffic with BlueField DPUs

Another topic we focused on during the demos was networking.

As these environments scale, especially in AI, telco, and edge use cases, networking quickly becomes a bottleneck, not just for performance but also for isolation. Once you start running multiple tenants, you need to guarantee separation, predictable behavior, and low latency, all at the same time.

What we showed is how BlueField DPUs help move part of that complexity out of the host. By offloading networking and security functions to the DPU, you reduce CPU overhead and get a more controlled and isolated environment.

From the OpenNebula side, this integration fits naturally into the overall architecture. It allows you to keep a consistent operational model while adding hardware acceleration for networking and security, instead of layering more software on top.

If you want to see how this works in practice, you can explore the integration between OpenNebula and NVIDIA BlueField DPUs here.

Continuing to Expand the NVIDIA Ecosystem

The momentum from GTC 2026 reflects a broader strategic direction: expanding OpenNebula’s integration with NVIDIA technologies to deliver fully operational AI factories.

As organizations continue to scale their AI initiatives, the focus will remain on simplifying infrastructure complexity while enabling sovereignty, efficiency, and performance at scale. OpenNebula will continue to evolve alongside the NVIDIA ecosystem, bringing together compute, networking, and orchestration into a unified platform for next-generation AI workloads.

We look forward to continuing these collaborations and supporting our users in building and operating AI infrastructure that is not only powerful, but truly production-ready.
