We’re excited to release our latest white paper: Telco AI Factories: An OpenNebula Reference Architecture. Building upon our previous AI Factory Reference Architecture, this new document is tailored specifically to the needs of the telco edge, an area transitioning from a connectivity-centric paradigm to a new model defined by distributed AI.

The Strategic Advantage of the Telco AI Factory

While hyperscalers dominate centralized AI model training, telcos own the highly distributed edge infrastructure necessary for the next phase of AI: distributed inference. Running AI workloads close to the data source unlocks several critical advantages:

- Latency-Critical Inference: Processing data within milliseconds for safety-critical and industrial applications.

- Reduced Backhaul Costs: Keeping large datasets at the edge rather than transporting them to central clouds.

- Enhanced Sovereignty: Ensuring sensitive public sector or enterprise data never leaves the local jurisdiction.
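
To put rough numbers on the first two points, here is a minimal back-of-the-envelope sketch. It relies on the well-known figure of roughly 5 microseconds of propagation delay per kilometer of optical fiber; the distances, stream bitrate, and stream count are hypothetical values chosen for illustration and do not come from the white paper.

```python
# Light travels through optical fiber at roughly 200,000 km/s,
# which is about 5 microseconds per kilometer.
FIBER_DELAY_US_PER_KM = 5.0


def round_trip_propagation_ms(distance_km: float) -> float:
    """Round-trip fiber propagation delay, ignoring queuing and processing."""
    return 2 * distance_km * FIBER_DELAY_US_PER_KM / 1000.0


def daily_backhaul_gb(stream_mbps: float, streams: int) -> float:
    """Daily data volume (decimal GB) if every stream is sent to a central cloud."""
    seconds_per_day = 24 * 60 * 60
    megabits = stream_mbps * streams * seconds_per_day
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


# Hypothetical distances: an edge site 20 km away, a central region 1000 km away.
edge_rtt_ms = round_trip_propagation_ms(20)
central_rtt_ms = round_trip_propagation_ms(1000)

# Hypothetical load: 100 camera streams at 4 Mbit/s each.
backhaul_gb = daily_backhaul_gb(stream_mbps=4, streams=100)

print(f"edge RTT: {edge_rtt_ms:.1f} ms, central RTT: {central_rtt_ms:.1f} ms")
print(f"backhaul avoided per day: {backhaul_gb:.0f} GB")
```

Under these assumptions, propagation alone costs about 10 ms per round trip to the central region versus 0.2 ms to the edge site, and inferring locally keeps about 4,320 GB per day off the backhaul. Real deployments add queuing, radio, and processing delays on top.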

To make edge nodes economically viable, they must be multi-purpose. An edge node must run 5G vRAN software to support mobile subscribers while simultaneously utilizing GPU resources for enterprise AI inference or internal network optimization (AI-RAN).

The technical hurdle is isolating these environments so that demanding AI workloads don’t degrade the strict performance requirements of the mobile network. Our white paper explains how to solve this with OpenNebula’s Enhanced Platform Awareness (EPA), which delivers near-native hardware performance through CPU pinning, NUMA awareness, and PCI passthrough/SR-IOV.
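
The isolation idea behind CPU pinning and NUMA awareness can be illustrated with a short sketch: each workload gets an exclusive set of cores on the NUMA node closest to its NIC or GPU. This is a conceptual model only, not OpenNebula's scheduler or API; the `Workload` class, the `pin_workloads` function, and the host layout are hypothetical names invented for this example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    cores: int      # number of dedicated cores requested
    numa_node: int  # NUMA node where the workload's NIC or GPU is attached


def pin_workloads(
    numa_cores: dict[int, list[int]], workloads: list[Workload]
) -> dict[str, list[int]]:
    """Give each workload exclusive cores on its own NUMA node."""
    free = {node: list(cores) for node, cores in numa_cores.items()}
    pinning: dict[str, list[int]] = {}
    for w in workloads:
        available = free[w.numa_node]
        if len(available) < w.cores:
            raise ValueError(f"NUMA node {w.numa_node} cannot fit {w.name}")
        # Take cores from the front of the free list; they are never reused.
        pinning[w.name] = available[: w.cores]
        free[w.numa_node] = available[w.cores :]
    return pinning


# Hypothetical two-socket host with 8 cores per NUMA node.
host = {0: list(range(0, 8)), 1: list(range(8, 16))}
plan = pin_workloads(
    host,
    [
        Workload("vran-du", cores=6, numa_node=0),
        Workload("ai-inference", cores=8, numa_node=1),
    ],
)
print(plan)
```

Because no core appears in two assignments and each workload stays on a single NUMA node, the AI workload cannot steal CPU time from the vRAN workload or force it into remote memory accesses.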

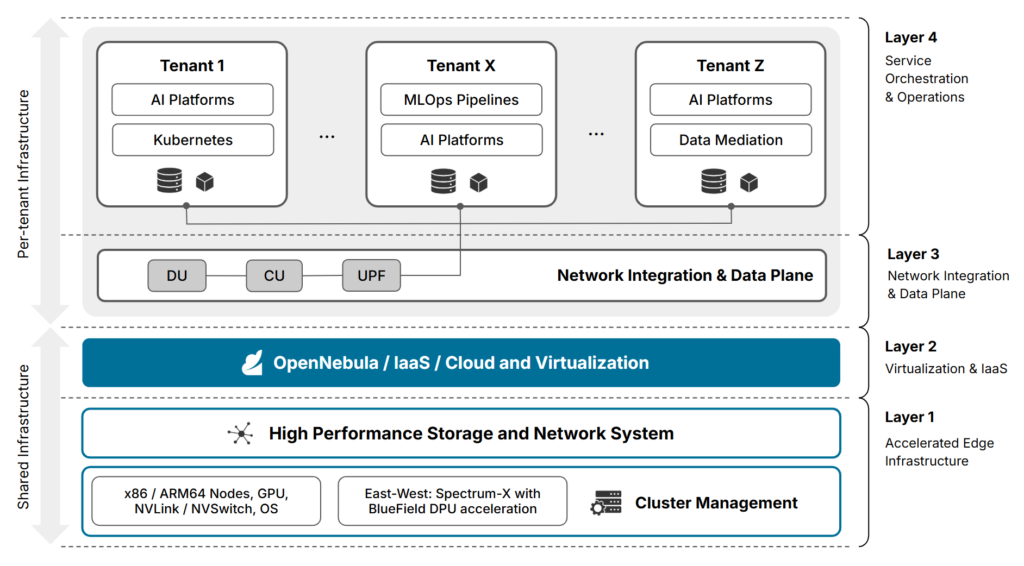

Inside the 4-Layer Reference Architecture

The optimal architecture for a Telco AI Factory is a converged, layered system that unifies high-performance computing with cloud-native agility.

- Layer 1: Accelerated Edge Infrastructure. The physical hardware base, including x86/ARM64 nodes, unified memory architectures, and NVIDIA Spectrum-X/BlueField DPUs for hardware offloading and hard security boundaries.

- Layer 2: Virtualization & IaaS. OpenNebula acts as the centralized control plane, abstracting physical resources and providing near-zero virtualization overhead to ensure workloads run with bare-metal efficiency.

- Layer 3: Network Integration & Data Plane. The critical link between the 5G core and AI. This layer handles UPF traffic steering and Local Breakout (LBO), feeding raw data directly into AI inference engines with sub-millisecond latency.

- Layer 4: Service Orchestration & Operations. The automation layer that handles complex multi-component AI platforms, together with complementary data mediation services and Day 2 MLOps pipelines.
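
The Local Breakout decision in Layer 3 can be sketched as a simple destination-based steering rule: traffic bound for on-site inference endpoints exits locally, and everything else continues to the central core. This is only an illustration of the decision logic, not how a UPF is actually configured; the prefix, the `steer` function, and the return labels are hypothetical.

```python
import ipaddress

# Hypothetical prefix served by the on-site AI inference engines.
LOCAL_BREAKOUT_PREFIXES = [ipaddress.ip_network("10.50.0.0/16")]


def steer(destination: str) -> str:
    """Return the path a packet should take based on its destination address."""
    addr = ipaddress.ip_address(destination)
    if any(addr in net for net in LOCAL_BREAKOUT_PREFIXES):
        return "local-breakout"
    return "central-core"


print(steer("10.50.3.7"))  # inside the local prefix
print(steer("8.8.8.8"))    # outside the local prefix
```

The first call is steered to the local breakout path and the second to the central core. A production UPF would match on richer criteria than the destination address alone.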

By moving beyond simple connectivity toward a converged, sovereign infrastructure, operators can secure a pivotal role in the AI value chain. This white paper provides the technical blueprint for this transition, showcasing how OpenNebula empowers operators to build edge AI environments that unite cloud-native flexibility with carrier-grade reliability and strict data sovereignty.
