Across Europe, supercomputing centers are going through a quiet but significant shift. For years, the model was straightforward: large computer systems, batch schedulers, and researchers submitting jobs. It worked, and it still does for a lot of workloads. But the EuroHPC AI Factories initiative makes it clear that this model is evolving. HPC centers are no longer just places where computing happens. They’re becoming AI service platforms, not just for researchers, but for startups, industry, and public sector teams building real AI systems.

And once you look at it that way, it’s clear this isn’t just a EuroHPC thing. The same shift is happening elsewhere, just in different ways. In the US, programs around the DOE labs and systems like Frontier and Aurora are already blending HPC, AI, and data more closely. Canada is moving in a similar direction through the Digital Research Alliance of Canada. The UK is investing in AI-focused systems like Isambard-AI while staying connected to EuroHPC. And Japan, with Fugaku and its planned successors, is pushing toward tightly integrated HPC and AI platforms. Different paths, same trend: HPC is becoming more flexible, more service-oriented, and much closer to how AI is actually built and used today.

From Supercomputers to Hybrid HPC-Cloud Platforms

This transition is not simply about adding more GPUs. It requires rethinking how infrastructure is operated and delivered. AI Factories must make powerful computing systems available as flexible services while preserving the performance, security, and governance standards that HPC environments require.

In practice, this means combining the reliability of supercomputing with the accessibility of modern cloud platforms. That tension, between control and flexibility, is now at the core of HPC evolution. Traditional HPC environments were designed for scheduled, batch-oriented workloads. That model still works. But it doesn’t match how AI is built today.

AI teams from SMEs, research, and industry expect something different. They want immediate access to GPU resources, environments they can spin up without friction, and infrastructure that behaves more like software than hardware. That’s where many HPC platforms start to show their limits.

EuroHPC as a First Large-Scale Signal

EuroHPC AI Factories make this shift visible. They bring together very different types of users and workloads on top of the same infrastructure, while still operating under the constraints that define HPC environments.

What makes them interesting is not just their scale, but what they represent. They are an early example of a broader transformation: HPC is becoming more dynamic, more service-oriented, and much closer to cloud in how it is consumed, without being able to simply adopt cloud abstractions wholesale. That’s not an easy balance to achieve.

A Unified Operational Layer

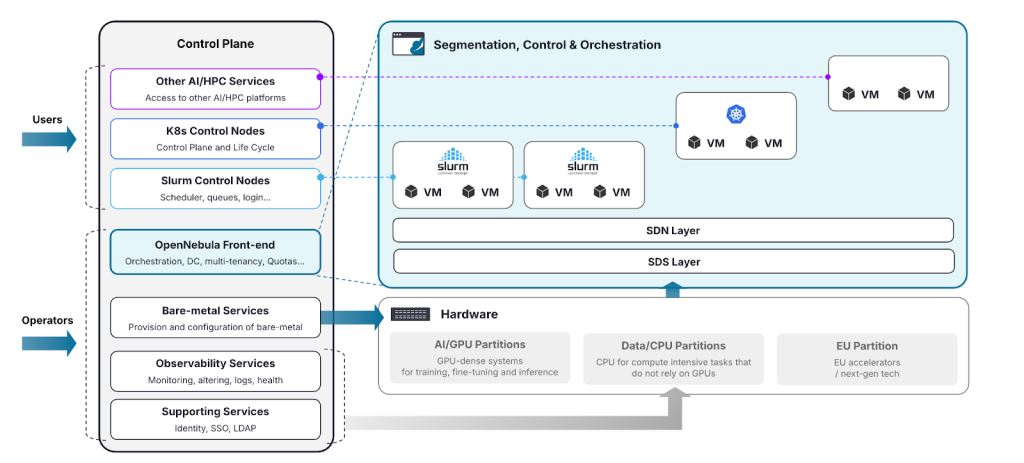

To support this new model, HPC centers need an infrastructure management layer that can bridge these worlds without fragmenting infrastructure or increasing operational complexity.

That’s essentially what OpenNebula does. It gives you on-demand, multi-tenant environments where tenants, acting as service admins, can run Slurm clusters, Kubernetes clusters, or virtual machines, all in one place. So instead of ending up with separate silos for each type of workload, everything just runs side by side on the same infrastructure. And for operators, this shift actually simplifies things. Everything can be controlled through a single layer with consistent policies and isolation mechanisms.

Just as importantly, it fits into existing environments instead of trying to replace them. And the nice part is that this extra flexibility doesn’t get in the way of users. From their perspective, not much changes. They can still work the way they’re used to, through Slurm, Kubernetes, or whatever interface they rely on, including AI services like LLMs. So you’re not replacing your environment. You’re making it coherent.

From Infrastructure to Services

One of the biggest changes introduced by AI Factories is not technical, but operational. It’s no longer enough to provide access to infrastructure. Users expect services. What used to be a collection of resources becomes a platform that delivers capabilities.

OpenNebula enables HPC centers to expose their infrastructure in this way. GPU resources can be consumed on demand, development environments can be spun up quickly, and AI workloads can move between different execution models without friction. This makes it possible to deliver GPU-as-a-Service, LLM-as-a-Service, and AI-as-a-Service across virtual machines, Kubernetes environments, and traditional HPC stacks, all with consistent isolation and governance.

Supporting the Full Spectrum of AI Workloads

AI workloads are not uniform. Some require tightly coupled GPU clusters for training. Others are interactive and iterative. Many are long-running services built around inference. Trying to support all of this with fragmented tooling quickly becomes unmanageable.

OpenNebula allows these different patterns to coexist within the same infrastructure. Resources can be allocated directly when performance matters most, or abstracted and shared when flexibility is needed. GPUs, in particular, can be partitioned and shared across applications and users, enabling higher utilization without compromising isolation.
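To make GPU sharing a bit more concrete, here is a minimal Python sketch of slice-based allocation. It is not OpenNebula’s API: the `Gpu` class, the `allocate` and `slice_utilization` functions, and the assumption that every GPU exposes seven equal slices (loosely modeled on MIG-style partitioning) are all hypothetical. What it illustrates is the basic idea behind sharing without compromising isolation: each slice has exactly one owner, while several tenants can still share one physical device.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

# Assumption: every GPU is split into 7 equal slices (loosely modeled on MIG).
SLICES_PER_GPU = 7


@dataclass
class Gpu:
    gpu_id: str
    # owners[i] is the tenant holding slice i, or None if the slice is free
    owners: List[Optional[str]] = field(
        default_factory=lambda: [None] * SLICES_PER_GPU
    )

    def free_slices(self) -> int:
        return sum(1 for owner in self.owners if owner is None)


def allocate(gpus: List[Gpu], tenant: str,
             n_slices: int) -> Optional[Tuple[str, List[int]]]:
    """First-fit: put all requested slices on one GPU; each slice gets one owner."""
    if n_slices < 1:
        raise ValueError("n_slices must be at least 1")
    for gpu in gpus:
        if gpu.free_slices() >= n_slices:
            free = [i for i, owner in enumerate(gpu.owners) if owner is None]
            granted = free[:n_slices]
            for i in granted:
                gpu.owners[i] = tenant  # exclusive ownership keeps tenants isolated
            return gpu.gpu_id, granted
    return None  # no single GPU has enough free slices


def slice_utilization(gpus: List[Gpu]) -> float:
    """Fraction of all slices that are currently owned by some tenant."""
    used = sum(SLICES_PER_GPU - gpu.free_slices() for gpu in gpus)
    return used / (SLICES_PER_GPU * len(gpus))


if __name__ == "__main__":
    gpus = [Gpu("gpu0"), Gpu("gpu1")]
    print(allocate(gpus, "startup-a", 3))   # ('gpu0', [0, 1, 2])
    print(allocate(gpus, "lab-b", 2))       # ('gpu0', [3, 4])
    print(allocate(gpus, "team-c", 4))      # ('gpu1', [0, 1, 2, 3])
    print(round(slice_utilization(gpus), 3))  # 0.643
```

With two GPUs, a 3-slice and a 2-slice request land on the same device, a 4-slice request spills onto the second one, and 9 of 14 slices end up in use. A real scheduler would also have to weigh GPU memory, topology, and fragmentation.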

Lowering Entry Barriers

EuroHPC AI Factories are also about creating environments where innovation can happen more easily. That means lowering the barrier to entry. Making it easier to deploy models. Reducing the time between getting access to infrastructure and actually doing useful work.

OpenNebula supports this by providing application marketplaces and model catalogues that allow operators to offer curated environments and reusable building blocks. Instead of starting from scratch, users can work with preconfigured platforms, shared models, and standardized services. This turns infrastructure into something people can actually build on, not just consume.

Enabling AI Ecosystems

A private application marketplace and model catalogue make it possible to create a local and open ecosystem where companies, researchers, and developers can contribute models, tools, and services that others can easily reuse. Over time, this can create a network effect, where the value of the platform grows with every new contribution.

OpenNebula’s marketplace can host emerging European AI applications and services, such as FedEU, Lithops, or Flower, alongside curated catalogues of AI models. These include European foundation models, such as EuroLLM or Mistral, as well as national initiatives such as ALIA, Salamandra, or GPT-NL.

At the same time, this local ecosystem is not isolated. It connects naturally with the broader open-source AI landscape. OpenNebula supports widely used frameworks such as vLLM, integrates with model repositories like Hugging Face, and also enables the use of NVIDIA AI applications and the NIM catalogue. This provides access to popular models and optimized AI services, combining open innovation with enterprise-grade performance.

Federation and Hybrid Cloud Without Complexity

Another important aspect of AI Factories is the need to connect infrastructures across sites. This introduces a new layer of complexity. Different organizations, different policies, different operational models, all needing to work together.

OpenNebula approaches this in a straightforward way. It enables multi-site deployments where each location retains control, while still being part of a larger system. Workloads, data, and services can move across sites without forcing everything into a single centralized model. It’s a practical way to enable collaboration without losing independence.

At a higher level, this can be extended through integration with federation and service orchestration tools such as Waldur. This makes it possible to connect multiple providers into a single environment, enabling cross-border collaboration and a more unified user experience across the EuroHPC ecosystem.

Moreover, the architecture supports a hybrid cloud model, where on-premise infrastructure can be extended with external resources when additional capacity is needed. This is especially useful during large training runs or temporary spikes in demand.

In those cases, the platform can provision resources from external providers while keeping the same operational model. OpenNebula integrates with public cloud services like AWS as well as European providers such as Scaleway, allowing capacity to expand without changing how users interact with the system.
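The bursting decision itself can be thought of as a simple policy: serve what fits locally, request external nodes for the shortfall up to a configured cap, and queue anything left over. The sketch below is a hypothetical illustration of that logic only. It does not call AWS, Scaleway, or OpenNebula APIs, and `plan_burst`, `BurstPlan`, and their parameters are made-up names for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BurstPlan:
    local_gpus: int      # GPUs served from on-premise capacity
    external_nodes: int  # nodes to request from an external provider
    queued_gpus: int     # demand left waiting because the cap was reached


def plan_burst(requested_gpus: int, local_free_gpus: int,
               gpus_per_external_node: int, max_external_nodes: int) -> BurstPlan:
    """Hypothetical bursting policy: local capacity first, then capped external nodes."""
    if gpus_per_external_node <= 0:
        raise ValueError("gpus_per_external_node must be positive")
    local = min(requested_gpus, local_free_gpus)
    shortfall = requested_gpus - local
    # Integer ceiling division: enough whole nodes to cover the shortfall.
    needed_nodes = -(-shortfall // gpus_per_external_node)
    nodes = min(needed_nodes, max_external_nodes)
    queued = max(0, shortfall - nodes * gpus_per_external_node)
    return BurstPlan(local, nodes, queued)


if __name__ == "__main__":
    print(plan_burst(64, 40, 8, 2))
    # BurstPlan(local_gpus=40, external_nodes=2, queued_gpus=8)
    print(plan_burst(16, 32, 8, 4))
    # BurstPlan(local_gpus=16, external_nodes=0, queued_gpus=0)
```

For example, a 64-GPU request with 40 local GPUs free, 8-GPU external nodes, and a cap of two nodes yields 40 local GPUs, two external nodes, and 8 GPUs left in the queue. Keeping the cap explicit is one way to bound external spend during demand spikes.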

Openness and Vendor-Neutrality as a Foundation

There’s a reason openness matters so much in this context. When infrastructure becomes a platform, the control layer becomes critical. If that layer is proprietary or tightly coupled to a specific vendor, it limits how the system can evolve.

OpenNebula is open source and designed to be vendor-neutral. That means operators can build on it, adapt it, and integrate it with the technologies they choose, without being locked into a particular ecosystem. This is less about ideology and more about long-term flexibility, especially in environments where infrastructure lifecycles span many years.

At the same time, being open source aligns naturally with the broader push for technological independence. It gives organizations full control over how their infrastructure is built and operated, while staying consistent with the sovereignty principles emerging worldwide, particularly in regions like Europe, Canada, and Japan.

Built for Heterogeneous AI Infrastructure

AI infrastructure is not standing still. New accelerators, new interconnects, new architectures keep showing up. Any platform that assumes a single hardware stack is going to struggle pretty quickly. OpenNebula is built with that in mind. It can orchestrate very different environments, from the latest GPU systems to high-performance networking and a range of storage backends, while staying open to whatever comes next.

It’s also already aligned with where the industry is going. It’s validated within the NVIDIA Cloud Partner reference architecture, including newer systems like GB200 and GB300 with NVLink and DPUs, and it integrates closely with the broader ecosystem: AMD, NetApp, VAST, and others. So you’re not locked into a single stack; you can actually combine the pieces that make sense for your setup.

At the same time, the platform stays open and modular. That makes it easier to adopt new accelerators as they appear, including emerging European technologies, without having to rethink everything from scratch. In practice, that just means one thing: you can evolve your infrastructure over time, instead of committing too early to a fixed path.

Virtualization as the Foundation for AI Infrastructure

One of the key architectural choices when building AI Factories is how to expose compute resources, especially GPUs. While OpenNebula can integrate with bare-metal provisioning systems, the recommended approach is to adopt virtualization as the primary deployment model.

This direction is also reflected in the broader industry. NVIDIA, in its NCP Software Reference Guide, highlights the shift toward virtual machine–based architectures over traditional bare-metal deployments. The reasons are quite practical. Virtualization allows multiple tenants to share the same physical resources more efficiently, improves isolation between workloads and users, simplifies lifecycle management, and significantly reduces provisioning times.

In environments where different users require different amounts of GPU capacity, this flexibility becomes critical. Instead of dedicating entire servers to a single workload, virtualization makes it possible to allocate resources more precisely and increase overall utilization without compromising performance.
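A quick back-of-the-envelope comparison shows why this matters. The sketch below, again hypothetical, takes a set of per-workload GPU requests and compares dedicating one 8-GPU server to each workload with packing the requests onto shared servers using first-fit decreasing, a classic bin-packing heuristic (not necessarily what any particular scheduler uses). The request sizes and server size are illustrative assumptions, not measurements.

```python
from typing import List


def whole_server_count(requests: List[int]) -> int:
    # One dedicated server per workload, regardless of how many GPUs it uses.
    return len(requests)


def packed_server_count(requests: List[int], gpus_per_server: int) -> int:
    """First-fit decreasing: place each request on the first server with room."""
    free: List[int] = []  # free GPUs left on each server opened so far
    for r in sorted(requests, reverse=True):
        if r > gpus_per_server:
            raise ValueError(f"request of {r} GPUs exceeds server size")
        for i, f in enumerate(free):
            if f >= r:
                free[i] -= r
                break
        else:
            free.append(gpus_per_server - r)  # no room anywhere: open a new server
    return len(free)


def gpu_utilization(requests: List[int], servers: int, gpus_per_server: int) -> float:
    return sum(requests) / (servers * gpus_per_server)


if __name__ == "__main__":
    requests = [1, 2, 1, 4, 3, 1]  # hypothetical GPUs needed per workload
    per_server = 8
    dedicated = whole_server_count(requests)
    packed = packed_server_count(requests, per_server)
    print(dedicated, gpu_utilization(requests, dedicated, per_server))  # 6 0.25
    print(packed, gpu_utilization(requests, packed, per_server))        # 2 0.75
```

For requests of 1, 2, 1, 4, 3, and 1 GPUs, whole-server dedication needs six servers at 25% GPU utilization, while packing fits the same work on two servers at 75%.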

At the same time, modern virtualization techniques largely remove the traditional trade-offs. OpenNebula enables direct access to hardware through PCI passthrough, SR-IOV networking, and CPU pinning. This allows virtual machines and containers to access GPUs, networking, and storage with performance that is effectively equivalent to bare metal. Multiple studies show that the overhead typically ranges between 0% and 3%, and in some cases virtualized workloads can even outperform bare metal thanks to optimizations such as huge pages and NUMA-aware scheduling. Recent work by OpenNebula Systems in collaboration with NVIDIA demonstrates this approach with next-generation platforms like GB200 NVL4, where GPUs are exposed directly to virtual machines while preserving native performance.
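For readers who want to run this kind of comparison themselves, overhead is usually expressed as a relative difference against the bare-metal baseline. The helper below is a minimal sketch of that calculation; the function name and the sample numbers are hypothetical, not results from any study. A positive value means the VM did worse than bare metal, and a negative value means it did better.

```python
def overhead_pct(bare_metal: float, virtualized: float,
                 higher_is_better: bool = True) -> float:
    """Relative virtualization overhead in percent versus a bare-metal baseline.

    Positive means the VM did worse than bare metal; negative means it did better.
    Use higher_is_better=True for throughput (e.g. samples/s) and False for
    runtimes (e.g. seconds per epoch).
    """
    if bare_metal <= 0:
        raise ValueError("bare-metal measurement must be positive")
    if higher_is_better:
        return 100.0 * (bare_metal - virtualized) / bare_metal
    return 100.0 * (virtualized - bare_metal) / bare_metal


if __name__ == "__main__":
    # Hypothetical measurements for illustration only.
    print(overhead_pct(1000, 980))                         # 2.0
    print(overhead_pct(1000, 1005))                        # -0.5
    print(overhead_pct(200, 203, higher_is_better=False))  # 1.5
```

With a hypothetical throughput of 1,000 samples/s on bare metal and 980 samples/s in a VM, the overhead is 2%; a VM reaching 1,005 samples/s would show -0.5%, meaning it ran slightly faster.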

A Platform for What Comes Next

EuroHPC AI Factories are one of the clearest signals of where HPC is heading. More dynamic. More service-driven. More aligned with how AI is developed and deployed. What works there is unlikely to stay confined to Europe or to publicly funded initiatives. It will influence how HPC infrastructure is designed and operated more broadly.

OpenNebula fits naturally into this transition, not just because it supports AI Factories today, but because it addresses the deeper shift behind them: the move from systems to platforms. This is backed by more than 15 years of production experience running large-scale infrastructures worldwide, including AI and HPC-focused infrastructure, and reinforced by its role in major industry initiatives such as IPCEI-CIS and its involvement in upcoming programs like IPCEI-AI.

And that shift is only just beginning!
