Many GPU-focused cloud providers and data centers start with a similar operating model: dedicated GPU servers assigned to customers for days, weeks, or months. Provisioning is often manual or only partially automated. This approach is practical in the early stages and helps monetize scarce hardware.

Over time, however, this model shows its limits. GPU hardware on its own is hard to differentiate, margins are thin, and providers end up competing mainly on price and availability. Operational effort remains high, while customer loyalty stays low.

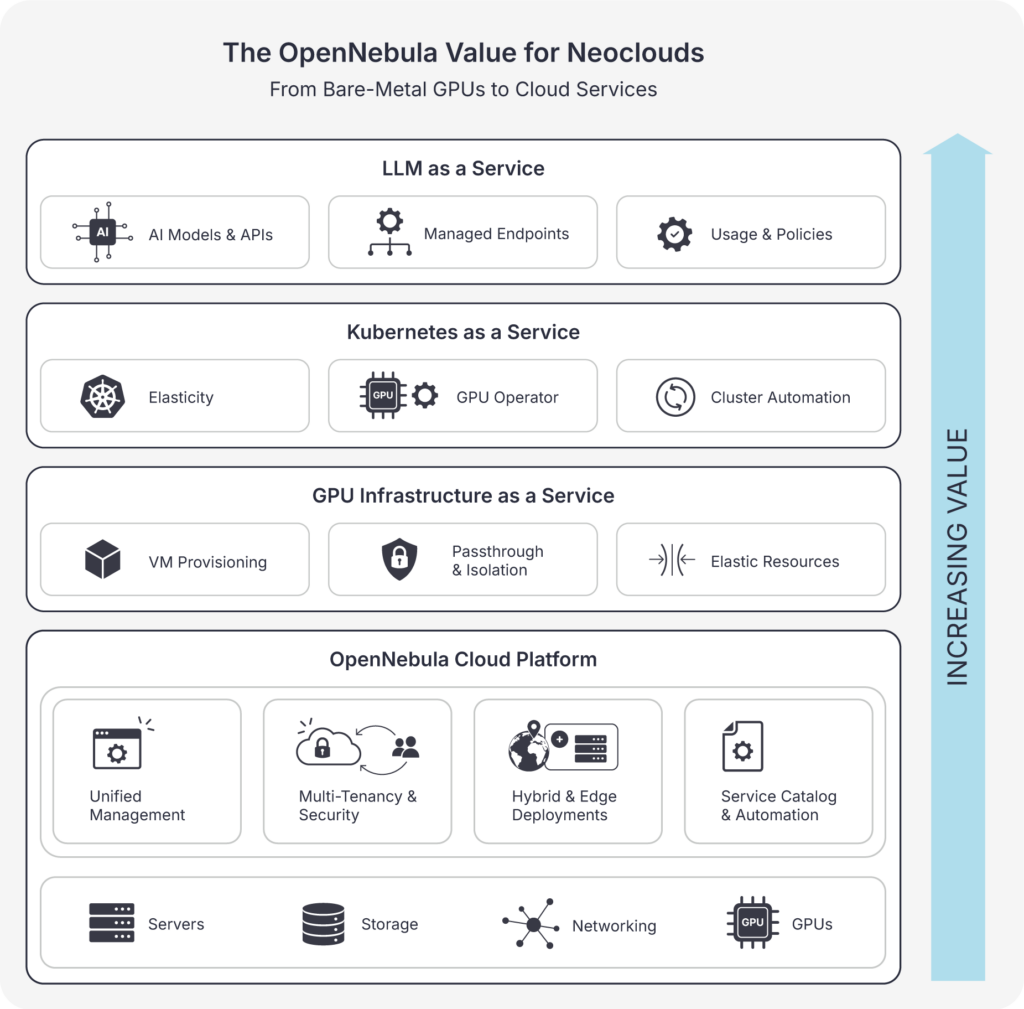

OpenNebula addresses this challenge by enabling providers to operate GPUs as a true cloud service. By adding automation, self-service, tenant isolation, and service catalogs on top of existing infrastructure, OpenNebula lets these GPU-focused providers, often called Neoclouds, move closer to their customers by offering higher-level services that deliver more value and stronger differentiation.

Three Levels of Differentiation Enabled by OpenNebula

OpenNebula provides a single cloud management platform that allows Neocloud providers to build fully automated, secure, and multi-tenant environments, supporting multiple service layers on top of the same GPU infrastructure.

1. IaaS: GPU Infrastructure as a Service

At the infrastructure layer, OpenNebula replaces static GPU allocation with a cloud-style operating model managed from a unified control plane.

- Automated provisioning of GPU-enabled virtual machines

- Support for GPU passthrough and mediated virtualization

- Secure multi-tenancy with strong isolation

- Integrated networking and storage for GPU workloads

- Elastic use of resources instead of long-lived server assignments

This turns GPU infrastructure into a scalable, on-demand service rather than a manual hosting operation.
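As a rough illustration of what automated provisioning of a GPU-enabled virtual machine could look like, the sketch below renders an OpenNebula-style VM template that requests GPUs through PCI passthrough. The helper name `gpu_vm_template`, the VM name, and the sizing values are hypothetical. The vendor ID `10de` is NVIDIA's PCI vendor ID, while the device class `0302` is an assumption about how the GPUs enumerate on a given host.

```python
def gpu_vm_template(name: str, cpus: int, memory_mb: int, gpus: int = 1) -> str:
    """Render an OpenNebula-style VM template requesting GPUs via PCI passthrough.

    Hypothetical helper for illustration; it only builds text and makes no API calls.
    """
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    lines = [
        f'NAME = "{name}"',
        f"CPU = {cpus}",
        f"VCPU = {cpus}",
        f"MEMORY = {memory_mb}",  # OpenNebula expresses MEMORY in megabytes
    ]
    # One PCI section per requested GPU. 10de is NVIDIA's PCI vendor ID;
    # class 0302 (3D controller) is an assumption and may differ per host.
    lines += ['PCI = [ VENDOR = "10de", CLASS = "0302" ]'] * gpus
    return "\n".join(lines)


template = gpu_vm_template("gpu-train-01", cpus=8, memory_mb=65536, gpus=2)
print(template)
```

A self-service portal or automation pipeline could generate a template like this per request and register it with the platform, instead of an operator assigning a whole server by hand.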

2. PaaS: Kubernetes as a Service

On top of infrastructure, OpenNebula enables providers to offer managed Kubernetes platforms integrated into the same cloud environment and designed for AI workloads.

- Kubernetes clusters delivered as a service

- GPU-aware scheduling, sharing, and isolation

- Integrated storage and networking for data-intensive workloads

- Automated lifecycle operations (create, scale, update, delete)

- Consistent security, access control, and governance across tenants

At this level, providers sell ready-to-use platforms rather than servers or bare clusters, which reduces operational complexity and lets users focus on their applications and models.
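From the tenant's side, GPU-aware scheduling on such a cluster typically means declaring GPUs as a resource in the workload spec. The sketch below builds a minimal Pod manifest that requests whole GPUs. It assumes the cluster runs the NVIDIA device plugin, which advertises the `nvidia.com/gpu` resource; the helper name, Pod name, and image URL are hypothetical.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a Pod manifest that asks the scheduler for whole GPUs (illustrative)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # GPUs are requested under limits; nvidia.com/gpu is the
                    # resource name assumed to be exposed by the device plugin.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


manifest = gpu_pod_manifest("train-job", "registry.example.com/train:latest", gpus=1)
print(json.dumps(manifest, indent=2))
```

The printed JSON can be applied like any other Kubernetes manifest; the scheduler then places the Pod only on a node with a free GPU.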

3. SaaS: LLM as a Service

At the highest layer, OpenNebula supports the delivery of fully managed LLM services using the same cloud foundation.

- Curated LLMs and AI services offered from a catalog

- Managed endpoints for inference and application integration

- Usage control, isolation, and metering across tenants

- Policy-based access and lifecycle management

This allows Neocloud providers to offer complete, consumable LLM services, where users focus on results rather than platforms or infrastructure.
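To make the idea of usage control and metering across tenants more concrete, here is a minimal sketch of per-tenant token accounting against a fixed quota. It is not OpenNebula's implementation: the `TenantMeter` class, the tenant names, and the quota figures are all hypothetical, and a production service would also need persistence and time-based quota resets.

```python
from collections import defaultdict


class TenantMeter:
    """Track per-tenant token usage against a fixed quota (hypothetical helper)."""

    def __init__(self, quotas: dict[str, int]):
        self.quotas = dict(quotas)
        self.used = defaultdict(int)

    def record(self, tenant: str, tokens: int) -> bool:
        """Record usage if it fits in the tenant's quota; return False otherwise."""
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        quota = self.quotas.get(tenant, 0)  # unknown tenants get no allowance
        if self.used[tenant] + tokens > quota:
            return False  # request rejected, nothing is charged
        self.used[tenant] += tokens
        return True

    def remaining(self, tenant: str) -> int:
        """Return how many tokens the tenant can still consume."""
        return self.quotas.get(tenant, 0) - self.used[tenant]


meter = TenantMeter({"acme": 1000, "globex": 500})
print(meter.record("acme", 800))  # True: within quota
print(meter.record("acme", 300))  # False: would exceed the 1000-token quota
print(meter.remaining("acme"))    # 200
```

An inference endpoint could call `record` before serving each request, so the same counters drive both enforcement and billing.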

Why OpenNebula Matters

OpenNebula gives Neocloud providers a single, open platform to evolve from basic GPU hosting to higher-value, differentiated cloud services:

- End-to-end automation across infrastructure and services

- Secure, sovereign, multi-tenant operation

- A clear progression from IaaS to PaaS and SaaS

- Improved margins and stronger, longer-term customer relationships

As GPU hardware becomes more widely available, differentiation moves from the hardware itself to how services are built and delivered. OpenNebula provides the cloud foundation that enables Neocloud providers to make that shift and sustain it.

Meet us in person! We'll be attending and exhibiting at key industry events, including Mobile World Congress in Barcelona, the EuroHPC Summit in Paphos, and NVIDIA GTC in San Jose. Come visit our team, see live demos, and discuss how OpenNebula can power your AI Factories and Neocloud platforms.
