Prologue

Building and maintaining a cloud RSS reader requires resources. Lots of them! Behind the deceptively simple user interface there is a complex backend with a huge datastore that must be able to fetch millions of feeds promptly, store billions of articles indefinitely and make any of them available in just milliseconds, either by searching or simply by scrolling through lists. Even calculating the unread counts for millions of users is enough of a challenge that it deserves a dedicated module for caching and maintaining them. The most basic feature every RSS reader should have, the ability to show only unread articles, requires so many resources that it accounts for around 30% of the storage pressure on our first-tier databases.
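
Unread counts are a classic case for incremental maintenance: instead of counting unread rows on every request, keep a counter per user and feed and adjust it as articles arrive or are marked as read. The following is a minimal in-memory sketch of that idea, not our actual caching module; the `UnreadCounter` class and its methods are hypothetical, and a real system would also need to persist these counters.

```python
from __future__ import annotations

from collections import defaultdict


class UnreadCounter:
    """Hypothetical in-memory cache of unread counts per (user, feed).

    Counts are adjusted incrementally instead of being recomputed by
    scanning every article, which is what makes reading them cheap.
    """

    def __init__(self) -> None:
        # user_id -> feed_id -> number of unread articles
        self._counts: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
        # (user_id, article_id) pairs already marked as read
        self._read: set[tuple[str, str]] = set()

    def article_arrived(self, feed_id: str, article_id: str, subscribers: list[str]) -> None:
        # A new article starts out unread for every subscriber of its feed.
        for user_id in subscribers:
            self._counts[user_id][feed_id] += 1

    def mark_read(self, user_id: str, feed_id: str, article_id: str) -> None:
        # Marking the same article twice must not decrement twice.
        key = (user_id, article_id)
        if key in self._read:
            return
        self._read.add(key)
        if self._counts[user_id][feed_id] > 0:
            self._counts[user_id][feed_id] -= 1

    def unread(self, user_id: str, feed_id: str | None = None) -> int:
        # .get() avoids creating empty entries for unknown users.
        feeds = self._counts.get(user_id, {})
        if feed_id is not None:
            return feeds.get(feed_id, 0)
        return sum(feeds.values())


counter = UnreadCounter()
counter.article_arrived("feed-1", "article-1", ["alice", "bob"])
counter.mark_read("alice", "feed-1", "article-1")
print(counter.unread("alice"), counter.unread("bob"))
```

Reading a count is then a dictionary lookup rather than a scan, at the price of updating counters on every write.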

Until recently we ran our infrastructure on bare-metal servers, meaning we deployed services like database and application servers directly on each server’s operating system. We were not using virtualization except for some really small micro-services, and those ran on practically a single physical server with local storage, split into several VMs. Last year we reached a point where we had a 48U (rack-unit) rack full of servers. More than half of those servers were databases, each with its own storage: usually 4 to 8 spinning disks in RAID-10 behind expensive RAID controllers equipped with cache modules and BBUs (battery backup units). All this was required to keep up with the needed throughput.
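
RAID-10 mirrors every block, so only half of the raw disk capacity is usable. The arithmetic below shows why a 4- to 8-disk array lands in the few-terabyte range; the 1TB disk size is an assumption for illustration, not our actual inventory.

```python
def raid10_usable_tb(disks: int, disk_size_tb: float) -> float:
    """Usable capacity of a conventional RAID-10 array.

    Data is striped across mirrored pairs, so each block exists on two
    disks and half of the raw capacity is usable.
    """
    if disks < 4 or disks % 2:
        raise ValueError("RAID-10 needs an even number of disks, at least 4")
    return disks / 2 * disk_size_tb


# Assumed 1TB spinning disks, for illustration only.
for n in (4, 6, 8):
    print(f"{n} disks -> {raid10_usable_tb(n, 1.0):.1f} TB usable")
```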

There was one big issue with this setup. Once a database server filled up (usually at around 3TB), we bought another one and the full one became read-only. CPUs and memory on those servers remained heavily underutilized while the storage was full. For a long time we knew we had to do something about it; otherwise we would soon need to rent a second rack, which would have doubled our bill. Cost was not the primary concern, though. It just didn’t feel right to have a rack full of expensive servers that we couldn’t fully utilize because their storage was full.
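
This rollover scheme amounts to append-only sharding: all writes go to the newest database server, and older, full servers only serve reads. A minimal sketch of that routing logic follows; the `Shard` and `ShardRouter` classes, the use of the 3TB figure as a hard limit and the article-id ranges are all assumptions for illustration.

```python
from __future__ import annotations

import bisect
from dataclasses import dataclass, field


@dataclass
class Shard:
    name: str
    first_article_id: int       # lowest article id stored on this shard
    capacity_tb: float = 3.0    # assumed fill limit before rolling over
    used_tb: float = 0.0

    @property
    def full(self) -> bool:
        return self.used_tb >= self.capacity_tb


@dataclass
class ShardRouter:
    shards: list[Shard] = field(default_factory=list)

    def writable(self) -> Shard:
        # Only the newest shard accepts writes; all older ones are read-only.
        return self.shards[-1]

    def roll_over(self, name: str, next_article_id: int) -> Shard:
        # Called when the newest shard is full: a new server takes the writes.
        shard = Shard(name, first_article_id=next_article_id)
        self.shards.append(shard)
        return shard

    def shard_for(self, article_id: int) -> Shard:
        # Shards cover contiguous id ranges, so a binary search over each
        # shard's first article id finds the one holding an article.
        starts = [s.first_article_id for s in self.shards]
        index = bisect.bisect_right(starts, article_id) - 1
        if index < 0:
            raise KeyError(article_id)
        return self.shards[index]
```

The drawback described above follows directly: once a shard stops receiving writes, its CPU and memory sit mostly idle while its disks stay full.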

Furthermore, redundancy was an issue too. We had redundancy on the application servers, but for databases of this size it’s very hard to keep everything redundant and fully backed up. Two years ago we had a major incident that almost cost us an entire server with 3TB of data, holding several months’ worth of articles. We recovered all of the data, but that was close.

Big changes were needed!

While developing new features is important, we had to stop for a while and rethink our infrastructure. After some long sessions and meetings with vendors, we made a final decision:

We will completely virtualize our infrastructure and we will use OpenNebula + KVM for virtualization and StorPool for distributed storage.

Cloud Management

We chose this solution not only because it is practically free if you don’t need enterprise support, but also because it has proven to be very effective. OpenNebula is now mature enough and has so many use cases that it’s hard to ignore. It is completely open source, with a big community of experts and optional enterprise support. KVM is now used as the primary hypervisor for EC2 instances at Amazon Web Services (AWS). This alone says a lot, and OpenNebula is primarily designed to work with KVM too. Our experience with OpenNebula over the past few months hasn’t made us regret this decision even once.

Storage

A crucial part of any virtualized environment is the storage layer. You aren’t gaining much if you are still using the local storage on your servers. The whole idea of virtualization is that your physical servers are expendable: you should be able to tolerate a server outage without any data loss or service downtime. How do you achieve that? With a separate, ultra-high-performance, fault-tolerant storage system connected to each server via a redundant 10G network.

There’s EMC’s enterprise solution, which can cost millions and uses proprietary hardware, so it’s out of our league. Also, big vendors don’t usually play well with small clients like us. If something breaks, we might just have to sit and wait for a ticket to be resolved, which contradicts our vision.

Then there’s Red Hat’s Ceph, which comes completely free of charge, but we were a bit afraid to use it, since nobody on the team had the expertise to run it in production with full confidence that we could recover all our data in the event of a crash. We were on a very tight schedule with this project, so we didn’t have time to send someone for training. The performance figures were also not very clear to us and we didn’t know what to expect. So we decided not to risk it in our main datacenter. We are now using Ceph in our backup datacenter, but more on that later.

Finally, there’s a still relatively small vendor that just so happens to be located some 15 minutes away from us: StorPool. They were recommended to us by colleagues running similar services, and we had a quick kick-off meeting with them. After the meeting it was clear to us that those guys know what they are doing, down to the lowest level.

Here’s what they do in a nutshell (quote from their website):

StorPool is a block-storage software that uses standard hardware and builds a storage system out of this hardware. It is installed on the servers and creates a shared storage pool from their local drives in these servers. Compared to traditional SANs, all-flash arrays, or other storage software StorPool is faster, more reliable and scalable.

It doesn’t sound very different from Ceph, so why did we choose them? Here are just some of the reasons:

- They offer full support for a very reasonable monthly fee, saving us the need to have a trained Ceph expert onboard.

- They promise higher performance than Ceph.

- They have their own OpenNebula storage addon (yeah, Ceph does too, I know)

- They are a local company, so we can always pick up the phone and resolve any issue in minutes rather than the hours or days it usually takes with big vendors.

The migration

You can read the full story of our migration, with pictures and detailed explanations, in our blog.

I will try to keep it short and tidy here. Basically, we managed to slim down our inventory to half of the previous rack space. This allowed us to reduce our costs and create enough room for later expansion, while greatly increasing our compute and storage capacity. We mostly reused our old servers in the process, with some upgrades to make the whole OpenNebula cluster homogeneous: the same CPU model and memory across all servers, which allowed us to use the “host-passthrough” CPU mode to improve VM performance without the risk of VMs crashing during a live migration. The process took us less than 3 months, with the actual migration happening in around two weeks. While we waited for the hardware to arrive, we had enough time to play with OpenNebula in different scenarios, try out VM migrations and different storage drivers, and generally try to break it while it was still in a test environment.

The planning phase

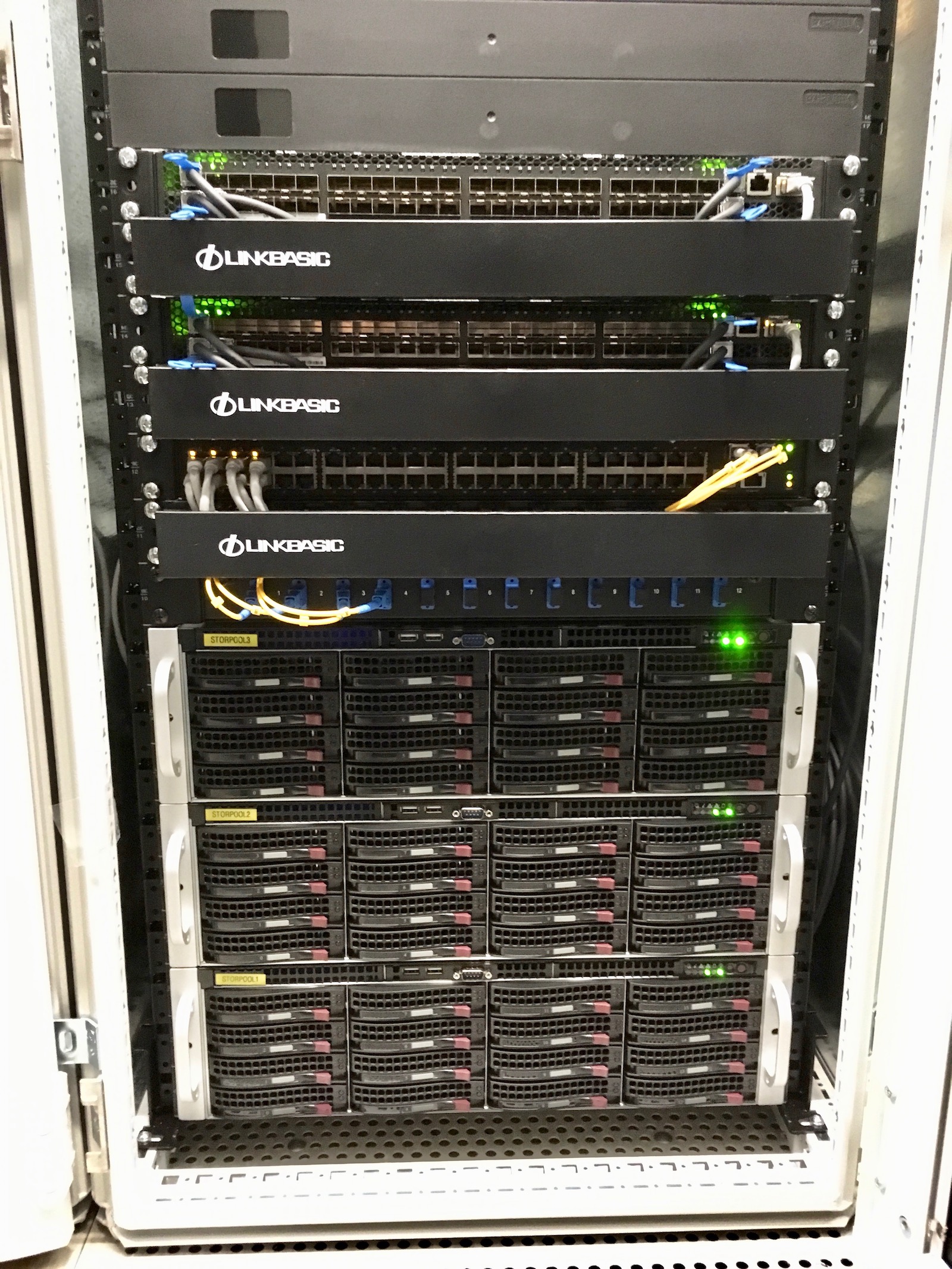

So after we made our choice for virtualization, it was time to plan the project. This happened in November 2017, so not very long ago. We rented a second rack in our datacenter. The plan was to install the StorPool nodes there and gradually move servers over, converting them into hypervisors. Once everything was moved, we would remove the old rack.

We ordered 3 servers for the StorPool storage. Each of those servers has room for 16 hard disks. We ordered only half of the needed hard disks, because we knew that once we started virtualizing servers, we would salvage a lot of drives that wouldn’t otherwise be needed.

We also ordered 10G switches for the storage network and new Gigabit switches to replace the old ones on the regular network. For the storage network we chose the Quanta LB8. Those beasts are equipped with 48x10G SFP+ ports, which is more than enough for a single rack. For the regular Gigabit network we chose the Quanta LB4-M. They have additional 2x10G SFP+ modules, which we used to connect the two racks via fiber optic cable.

We also ordered a lot of other smaller stuff, like 10G network cards, plus a lot of CPUs and DDR memory. Initially we didn’t plan to upgrade the servers before converting them to hypervisors, in order to cut costs. However, after some benchmarking we found that our current CPUs were not up to the task. We were using mostly dual-CPU servers with the Intel Xeon E5-2620 (Sandy Bridge), and they were already dragging even before the Meltdown patches. After some research we chose to upgrade all servers to the E5-2650 v2 (Ivy Bridge), an 8-core CPU (16 threads with Hyper-Threading) with a turbo frequency of 3.4 GHz. We already had two of these, and benchmarks showed a two-fold increase in performance compared to the E5-2620.
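
A rough way to see where a two-fold gain could come from is to compare aggregate physical cores times base clock for a dual-socket server. The core counts and base clocks below are Intel’s published figures (E5-2620: 6 cores at 2.0 GHz; E5-2650 v2: 8 cores at 2.6 GHz); treating core-GHz as a throughput proxy is a simplifying assumption that ignores Hyper-Threading, turbo, per-clock improvements and memory bandwidth.

```python
# Published specs per socket: (physical cores, base clock in GHz).
CPUS = {
    "E5-2620": (6, 2.0),
    "E5-2650 v2": (8, 2.6),
}


def core_ghz(model: str, sockets: int = 2) -> float:
    """Aggregate physical cores x base clock for a multi-socket server.

    A crude throughput proxy only: it ignores Hyper-Threading, turbo,
    per-clock (IPC) gains and memory bandwidth.
    """
    cores, ghz = CPUS[model]
    return sockets * cores * ghz


old = core_ghz("E5-2620")
new = core_ghz("E5-2650 v2")
print(f"{old:.1f} -> {new:.1f} core-GHz, ratio {new / old:.2f}x")
```

The proxy accounts for roughly 1.7x; the rest of a measured 2x would presumably come from factors it ignores, such as the newer chip’s higher turbo clock.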

We also decided to bring all servers up to 128GB of RAM. We had different configurations, but most servers had 16-64GB and only a handful were already at 128GB. So we made some calculations and ordered 20+ CPUs and 500+GB of memory.
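
The calculation boils down to this: for every server, count the sockets that don’t yet hold an E5-2650 v2 and the memory missing up to 128GB, then sum. A sketch with a made-up inventory (the server names and figures are hypothetical, not our real fleet):

```python
from __future__ import annotations

from dataclasses import dataclass

TARGET_CPU = "E5-2650 v2"
TARGET_RAM_GB = 128
SOCKETS = 2


@dataclass
class Server:
    name: str
    cpus: list[str]   # CPU model installed in each populated socket
    ram_gb: int


def upgrade_order(servers: list[Server]) -> tuple[int, int]:
    """Return (CPUs to buy, GB of RAM to buy) to bring every server to target."""
    cpus_needed = 0
    ram_needed = 0
    for s in servers:
        # Every socket must end up with the target CPU: replace mismatched
        # CPUs and populate empty sockets.
        matching = sum(1 for model in s.cpus if model == TARGET_CPU)
        cpus_needed += SOCKETS - matching
        ram_needed += max(0, TARGET_RAM_GB - s.ram_gb)
    return cpus_needed, ram_needed


# Hypothetical inventory, for illustration only.
fleet = [
    Server("db-01", ["E5-2620", "E5-2620"], 64),
    Server("db-02", ["E5-2620", "E5-2620"], 32),
    Server("app-01", ["E5-2650 v2", "E5-2650 v2"], 128),
    Server("app-02", ["E5-2620"], 16),
]
print(upgrade_order(fleet))  # (6, 272)
```

In practice memory comes in fixed-size DIMMs, so a real order would round each server’s shortfall up to whole modules.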

After we placed all orders, we had about a month before everything arrived, so we used that time to prepare whatever we could without the additional hardware.

The preparation phase

The execution phase

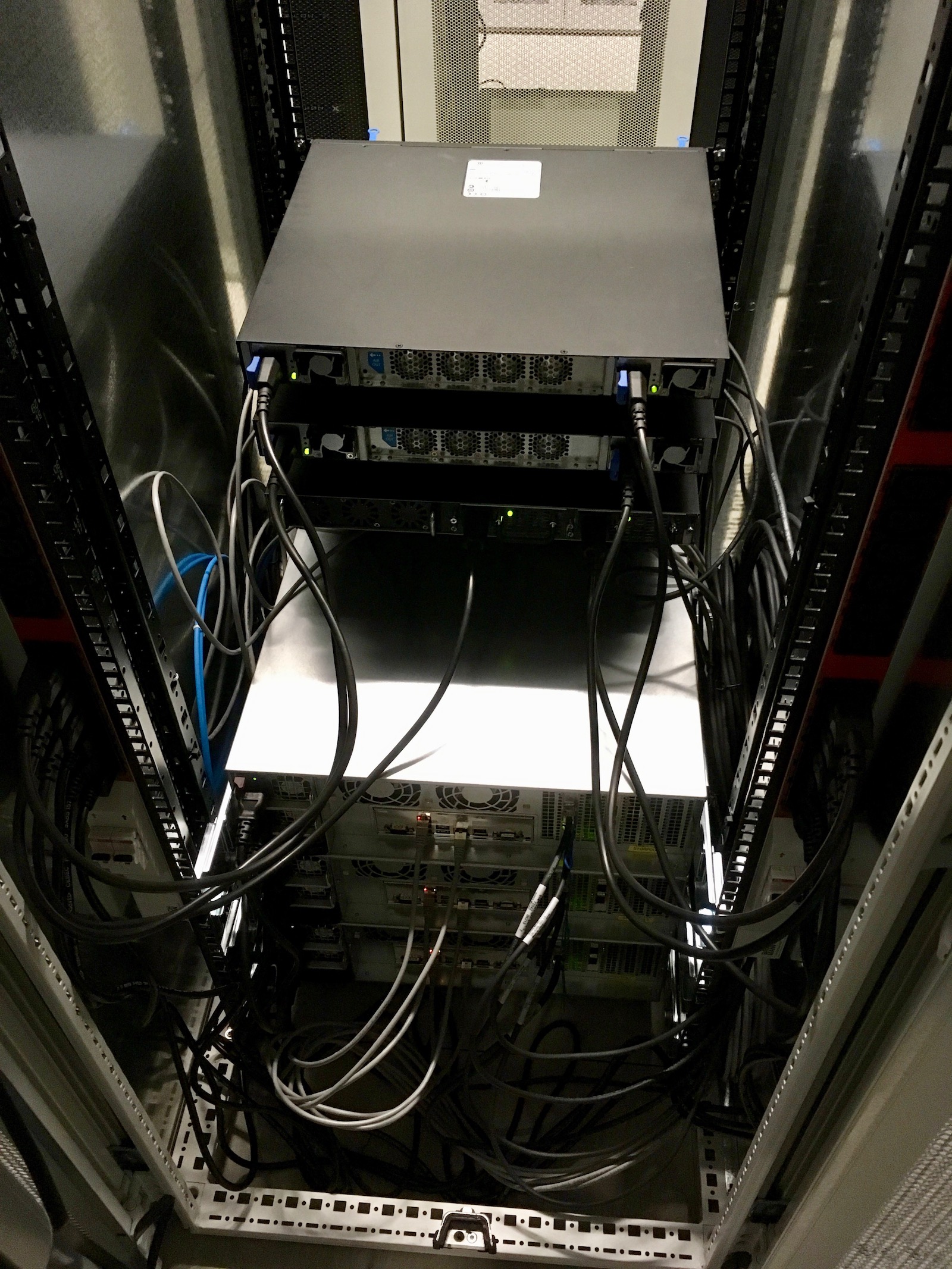

After we had our shiny new OpenNebula cluster with StorPool storage fully working, it was time to migrate the virtual machines that were still running on local storage. The guys from StorPool helped us a lot here by providing a migration strategy that we executed for each VM. If there is interest, we can describe the whole process in a separate post.

From here on we gradually migrated physical servers to virtual machines. The strategy was different for each server: some of them were databases, others application and web servers. We managed to migrate all of them with anywhere from a few seconds of downtime to none at all. At first we didn’t have much space for virtual machines, since we had only two hypervisors, but with each iteration we were able to convert more and more servers at once.
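
The snowball effect comes from each converted server becoming a hypervisor itself. A toy model under assumed numbers: two starting hypervisors, each able to host the workloads of a fixed number of physical servers, and every emptied server rejoining the cluster. The `per_hypervisor` capacity is a hypothetical parameter, not a measurement.

```python
from __future__ import annotations


def migration_batches(physical: int, hypervisors: int = 2, per_hypervisor: int = 2) -> list[int]:
    """Return how many physical servers get converted in each iteration.

    Toy model with assumed parameters: each hypervisor can host the
    workloads of `per_hypervisor` physical servers as VMs. An iteration
    moves as many workloads as free capacity allows; the emptied servers
    are then upgraded and rejoin the cluster as hypervisors.
    """
    if physical < 0 or hypervisors < 1 or per_hypervisor < 1:
        raise ValueError("invalid cluster parameters")
    batches: list[int] = []
    hosted = 0          # workloads already running as VMs
    remaining = physical
    while remaining > 0:
        # Free capacity never shrinks: each converted server consumes one
        # slot but adds per_hypervisor >= 1 new ones, so the loop ends.
        free = hypervisors * per_hypervisor - hosted
        batch = min(free, remaining)
        batches.append(batch)
        hosted += batch
        remaining -= batch
        hypervisors += batch  # converted servers become hypervisors
    return batches


print(migration_batches(20))
```

With 20 servers to convert and the default assumptions, the batches come out as `[4, 8, 8]`: each round can be larger than the last because the previous round’s servers add capacity.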

After that, each server went through a complete overhaul. CPUs were upgraded to 2x E5-2650 v2 and memory was bumped to 128GB. The expensive RAID controllers were removed from the expansion slots, and 10G network cards were installed in their place. Large (>2TB) hard drives were removed and smaller drives were installed just for the OS. Once re-equipped, the servers were installed in the new rack and connected to the OpenNebula cluster. The guys from StorPool configured each server’s connection to the storage and verified that it was ready for production use. The first 24 leftover 2TB hard drives were immediately put to work in our StorPool cluster.

The result

In just a couple of weeks of hard work we managed to migrate everything!

In the new rack we have a total of 120TB of raw storage, 1.5TB of RAM and 400 CPU cores. Each server is connected to the network with 2x10G network interfaces.
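
Raw capacity is not the same as usable capacity, since replicated block storage keeps several copies of every block. The sketch below assumes three copies and no reserved headroom by default; both values are assumptions for illustration, not our actual StorPool settings.

```python
def usable_tb(raw_tb: float, copies: int = 3, reserve: float = 0.0) -> float:
    """Usable capacity of replicated storage.

    Each block is stored `copies` times; `reserve` is the fraction of raw
    space kept free (e.g. to re-replicate after a disk failure).
    Both defaults are assumptions for illustration.
    """
    if copies < 1 or not 0.0 <= reserve < 1.0:
        raise ValueError("invalid replication settings")
    return raw_tb * (1.0 - reserve) / copies


print(f"{usable_tb(120):.1f} TB usable with 3 copies")
print(f"{usable_tb(120, copies=2):.1f} TB usable with 2 copies")
```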

That’s roughly 4 times the capacity and 10 times the network performance of our old setup with only half the physical servers!

The flexibility of OpenNebula and StorPool allows us to use the hardware very efficiently. We can spin up virtual machines in seconds with any combination of CPU, memory, storage and network interfaces, and later change any of those parameters just as easily. It’s DevOps heaven!

This setup will be enough for our needs for a long time, and we have more than enough room for expansion if the need arises.

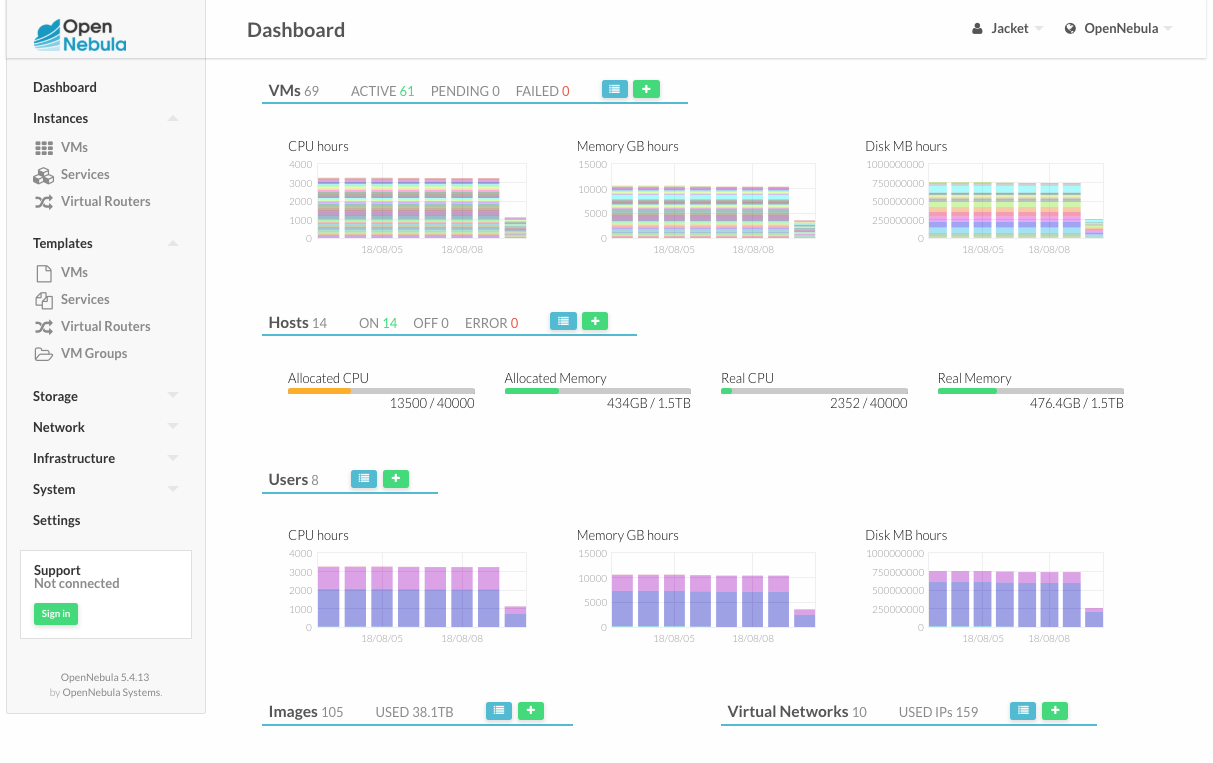

Our OpenNebula cluster

We now have more than 60 virtual machines, because we split some physical servers into several smaller VMs behind load balancers for better load distribution, and we have allocated more than 38TB of storage.

We have 14 hypervisors with plenty of resources available on each of them. All of them use the same CPU model, which lets us use QEMU/KVM’s “host-passthrough” CPU mode to improve VM performance without the risk of VMs crashing during a live migration.
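
With host-passthrough, the guest sees the host’s exact CPU model and feature flags, so live migration is only safe if the destination offers at least the same features. Keeping every hypervisor on one CPU model is the simplest way to guarantee that. The following is a sketch of a pre-flight check, assuming you have already collected each host’s CPU model and flag set; the `HostCPU` structure, host names and simplified flag sets are hypothetical, and the passthrough setting itself lives in the VM configuration, not in this code.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class HostCPU:
    host: str
    model: str
    flags: frozenset[str]


def passthrough_safe(source: HostCPU, target: HostCPU) -> bool:
    """Necessary (not sufficient) condition for live-migrating a
    host-passthrough guest: the target must support every CPU flag the
    guest may already be using on the source."""
    return source.flags <= target.flags


def homogeneous(hosts: list[HostCPU]) -> bool:
    """True if all hypervisors report the same model and flag set."""
    return len({(h.model, h.flags) for h in hosts}) <= 1


# Simplified flag sets: F16C and RDRAND were added in Ivy Bridge.
ivy = frozenset({"avx", "f16c", "rdrand"})
sandy = frozenset({"avx"})
hv01 = HostCPU("hv01", "E5-2650 v2", ivy)
hv02 = HostCPU("hv02", "E5-2650 v2", ivy)
old = HostCPU("old01", "E5-2620", sandy)
print(homogeneous([hv01, hv02]), passthrough_safe(hv01, old))
```

Migrating from the older host to the newer one passes the check, but not the other way round, which is exactly the mixed-cluster hazard a homogeneous cluster avoids.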

We are very happy with this setup. Whenever we need to start a new server, it only takes minutes to spin up a new VM instance with whatever CPU and memory configuration we need. If a server crashes, all VMs will automatically migrate to another server. OpenNebula makes it really easy to start new VMs, change their configurations, manage their lifecycle and even completely manage your networks and IP address pools. It just works!

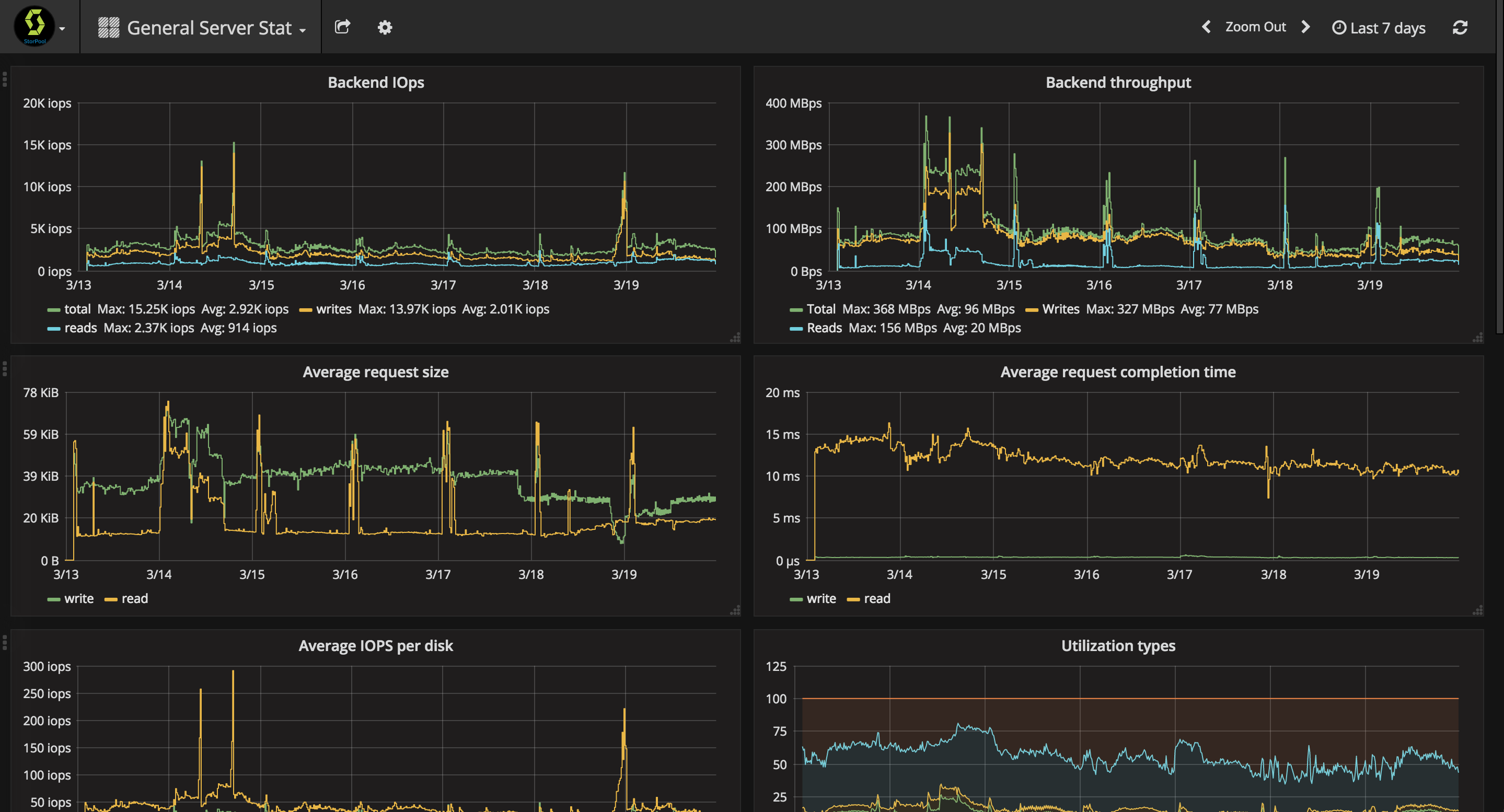

StorPool, on the other hand, makes sure that we have all the IOPS we need at our disposal whenever we need them.

Goodies

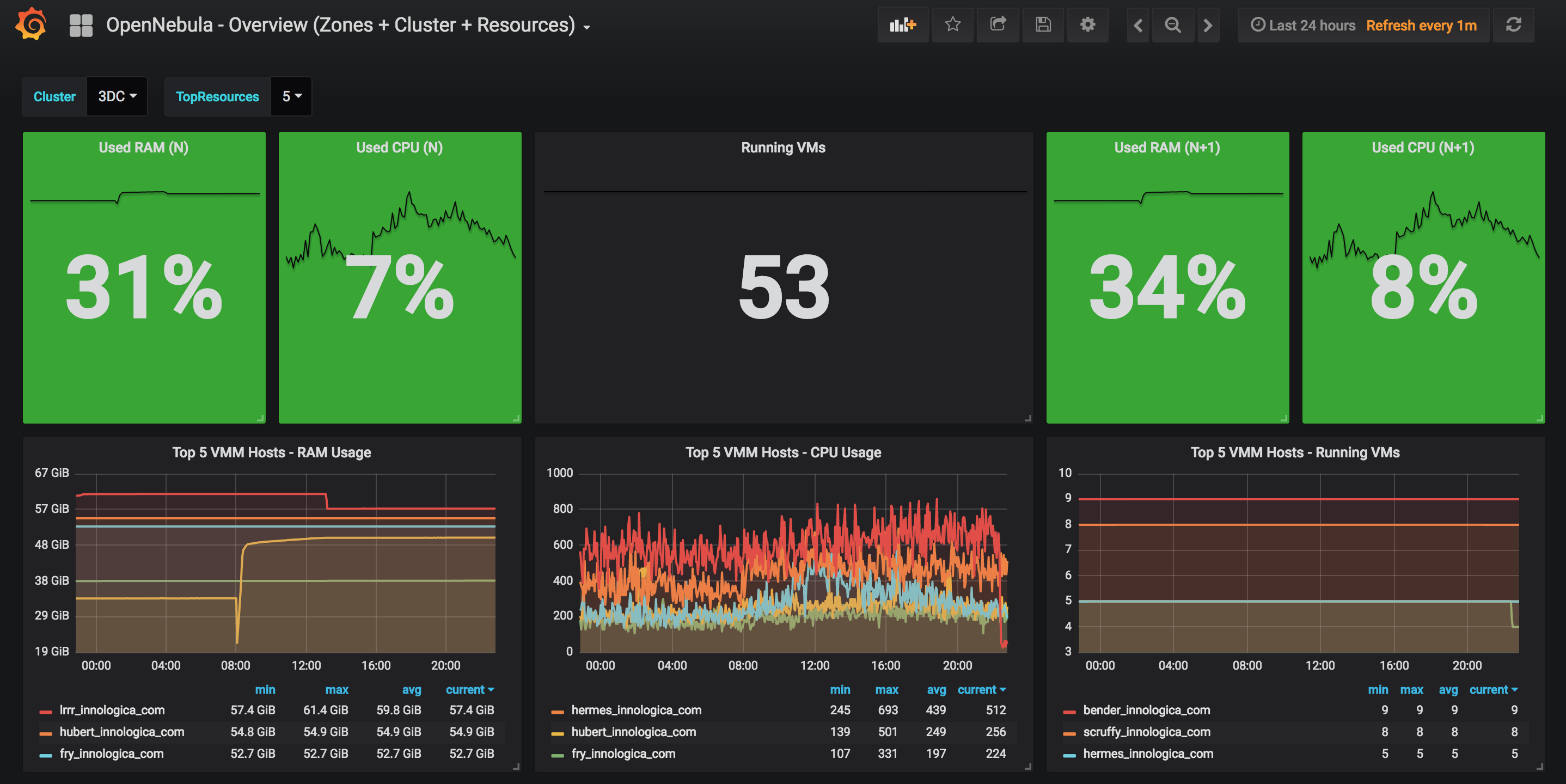

We are using Graphite + Grafana to plot some really nice graphs for our cluster.
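
Graphite’s Carbon daemon accepts metrics over a simple plaintext protocol: one `path value timestamp` line per data point, on TCP port 2003 by default. The following is a minimal sketch of pushing a few cluster metrics that way; the host name and metric paths are hypothetical, and this is not necessarily how the solution we borrowed collects its data.

```python
from __future__ import annotations

import socket
import time


def graphite_lines(metrics: dict[str, float], timestamp: int | None = None) -> str:
    """Format metrics for Carbon's plaintext protocol: '<path> <value> <timestamp>\\n'."""
    ts = int(time.time()) if timestamp is None else timestamp
    for path in metrics:
        if " " in path:
            raise ValueError(f"metric path must not contain spaces: {path!r}")
    return "".join(f"{path} {value} {ts}\n" for path, value in metrics.items())


def send_to_graphite(payload: str, host: str = "graphite.example.internal", port: int = 2003) -> None:
    # Hypothetical host; plaintext Carbon listens on TCP 2003 by default.
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(payload.encode("ascii"))


payload = graphite_lines(
    {"opennebula.hv01.cpu_used_pct": 42.5, "opennebula.hv01.vms_running": 7},
    timestamp=1514764800,
)
print(payload, end="")
# send_to_graphite(payload)  # uncomment when a real Carbon host is available
```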

We have borrowed the solution from here. That’s what’s so great about open software!

Our team is constantly informed about the health and utilization of our cluster. A glance at our wall-mounted TV screen is enough to tell whether everything is all right. We can see both our main and backup datacenters, both running OpenNebula. It’s usually all green 🙂

StorPool also uses Grafana for their performance monitoring and has given us access to it, so we can get insights into what the storage is doing at the moment, which VMs are the biggest consumers, etc. This way we can always tell when a VM has gone rogue and is stealing our precious IOPS.
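
Spotting a rogue VM from such data comes down to ranking VMs by IOPS and flagging those far above the rest. The following is a sketch over made-up samples; the threshold of five times the median is an arbitrary assumption, and nothing here queries StorPool’s dashboards.

```python
from __future__ import annotations

import statistics


def rogue_vms(iops_by_vm: dict[str, float], factor: float = 5.0) -> list[tuple[str, float]]:
    """Return VMs whose IOPS exceed `factor` times the median, busiest first."""
    if not iops_by_vm:
        return []
    median = statistics.median(iops_by_vm.values())
    flagged = [(vm, iops) for vm, iops in iops_by_vm.items() if iops > factor * median]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Hypothetical samples, for illustration only.
samples = {"db-01": 1800.0, "app-01": 250.0, "app-02": 300.0, "web-01": 120.0, "search-01": 9000.0}
print(rogue_vms(samples))
```

Comparing against the median rather than the mean keeps one very busy VM from raising the bar for everyone else.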
