A new OpenNebula appliance, along with innovative new Edge features, will make deploying your AWS IoT Greengrass Edge environment simple and pain-free.

AWS IoT Greengrass is a service that extends Amazon Web Services to nodes “on the edge”, where data is gathered as close as possible to its source, processed, and sent to the main cloud. It’s a framework that provides an easy and secure way for edge devices and clouds to communicate. It lets you run custom AWS Lambda functions on the core devices, access AWS services directly, or work offline without an Internet connection and synchronize data later, once a connection is available.

While AWS provides the software stack to connect edge nodes to AWS IoT Greengrass, it doesn’t offer the edge computing resources. The SDK and services are expected to be installed on an on-premises cloud within a company data center, or with any preferred edge computing provider.

The OpenNebula team is developing an easy-to-use service appliance for the OpenNebula Marketplace with a pre-installed SDK and services for AWS IoT Greengrass, plus on-boot automation. This appliance will allow you to easily run Greengrass end-nodes as virtual machines in your on-premises cloud managed by OpenNebula. The appliance can act as a Greengrass core, a device, or both. Automation inside the appliance prepares the instance for its specific use on the very first boot. Preparation can be semi-manual, with pre-generated certificates installed in the right places, or fully automatic, where you pass only the AWS credentials and leave all the complexity of registering with AWS to the appliance itself.

While you can run Greengrass end-devices on your own cloud, you still need an AWS account and a subscription to the AWS IoT Greengrass central cloud service to orchestrate your IoT devices. Learn more in Getting Started with AWS IoT Greengrass.

This appliance will provide AWS IoT Greengrass integration that allows you to easily extend your OpenNebula cloud to the edge. It doesn’t get any easier!

A Real World Example

We are going to showcase OpenNebula 5.8 with its new Distributed Data Centers (DDC) feature to automatically deploy an on-demand edge cloud integrated with AWS IoT Greengrass: from nothing to a ready-to-use, geographically distributed infrastructure with Greengrass nodes as close as possible to their customers, fully automated and within tens of minutes.

As a cover story for this demo, we have chosen a model company providing monitoring as a service. The company needs a large distributed infrastructure to provide performance metrics for its customers’ websites from different locations around the world. The goal of this service is to alert customers when their website is down (completely or just from a particular location), when the website is too slow, or when its SSL certificates are nearing expiration.

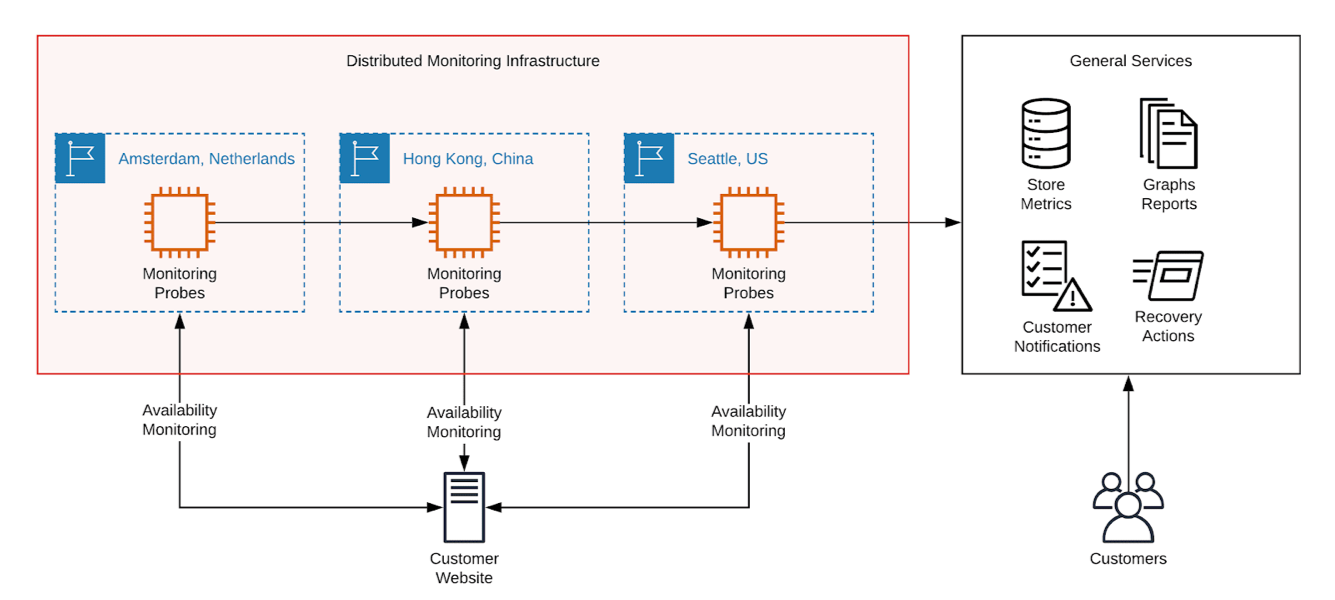

A simplified high-level schema of our model monitoring service:

The schema is divided into two parts. The first is the general services, which store the measured metrics, generate graphs and dashboards, alert the customers (e-mail, SMS), or trigger custom recovery actions. The second is the core distributed monitoring infrastructure (red box), with probes responsible for measuring how each customer’s sites perform. While the general services can run in any single datacenter, the monitoring probes must run from as many different parts of the world as possible.

The demo focuses only on the core distributed monitoring infrastructure from the red box.

To implement the distributed monitoring infrastructure, we deploy the AWS IoT Greengrass core services on our custom edge nodes. These provide us with an environment to control and run the monitoring probes, and take care of transporting the measurements to the higher-level processing services. We use Packet Bare Metal Hosting as the Edge Cloud provider for this demo, but any suitable resources (on-premises or public cloud) can be used.

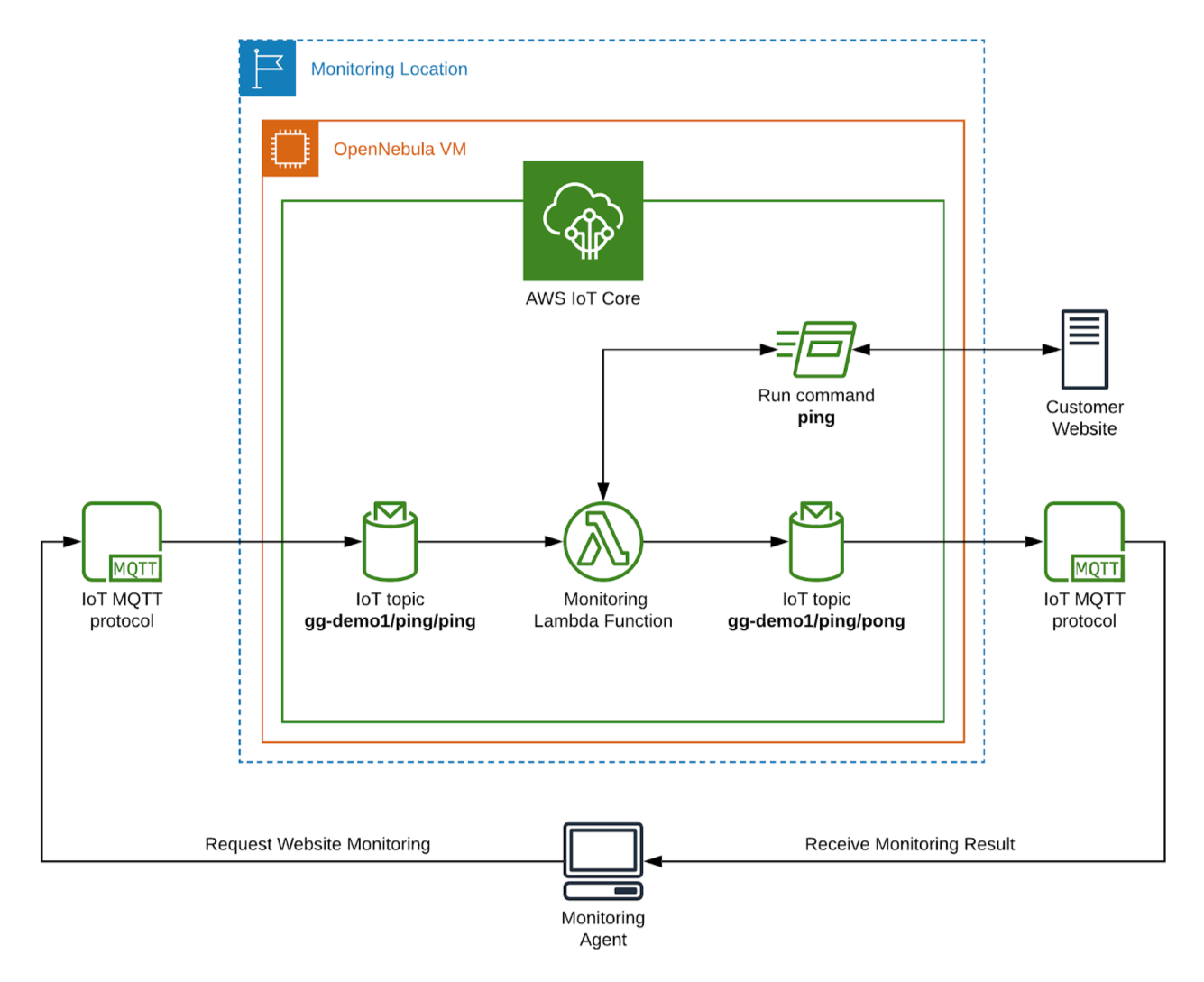

A low-level schema of single monitoring location:

Each monitoring location is implemented as an OpenNebula KVM virtual machine running the AWS IoT Greengrass core with a custom monitoring AWS Lambda function. The Lambda function waits for an MQTT message requesting a particular site to monitor. When the request is received, the function runs a simple latency check of the customer’s site using the ping command and sends back a message with the result.
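The request, ping, reply flow described above can be sketched in Python. This is only an illustration under stated assumptions, not the demo's actual Lambda code: the topic name, the `{"host": ...}` request format, and the function names are hypothetical, and `publish` stands in for whatever MQTT publish call the function uses (in a real Greengrass Lambda function it would presumably wrap the publish call of the Greengrass Core SDK).

```python
import json
import re
import subprocess

# Hypothetical topic name; the article does not state the topics used in the demo.
RESULT_TOPIC = "monitoring/result"


def run_ping(host, count=3):
    """Run the system ping command and return its text output."""
    completed = subprocess.run(
        ["ping", "-c", str(count), host],
        capture_output=True, text=True, timeout=30,
    )
    return completed.stdout


def parse_avg_rtt_ms(ping_output):
    """Extract the average round-trip time from the ping summary line.

    Expects a summary such as 'rtt min/avg/max/mdev = 1.0/2.0/3.0/0.5 ms'.
    Returns None when no summary is found (for example, host unreachable).
    """
    match = re.search(r"= [\d.]+/([\d.]+)/", ping_output)
    return float(match.group(1)) if match else None


def handle_request(event, publish, location, ping=run_ping):
    """Measure latency to the requested host and publish the result.

    `event` is the decoded MQTT request, assumed to look like {"host": "..."}.
    `publish` is any callable taking (topic, payload).
    """
    host = event["host"]
    result = {
        "location": location,
        "host": host,
        "avg_rtt_ms": parse_avg_rtt_ms(ping(host)),
    }
    publish(RESULT_TOPIC, json.dumps(result))
    return result
```

Passing `publish` and `ping` in as arguments keeps the sketch independent of any particular SDK and makes it possible to try the logic without network access.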

The Demo Monitoring Agent is implemented as a simple console application that periodically broadcasts an MQTT message to the monitoring Lambda functions with a request to monitor a particular host, and shows all the measurements received from active locations on the terminal. It also computes the minimum, maximum, and average of the measured values. See the demo Monitoring Agent run in the video, or the screenshot in the section with results.
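The aggregation step of such an agent is small enough to sketch. The example below is a hypothetical illustration, assuming the agent keeps the latest latency per location in a dictionary and uses `None` for locations that have not answered; the values are made up and are not measurements from the demo.

```python
from statistics import mean


def summarize(latencies_ms):
    """Return min, max and average over the latest latency per location.

    Locations with no measurement yet (None) are skipped.
    """
    values = [v for v in latencies_ms.values() if v is not None]
    if not values:
        return None
    return {"min": min(values), "max": max(values), "avg": mean(values)}


# Illustrative values only, not measurements from the demo.
latest = {"loc-a": 12.0, "loc-b": 80.0, "loc-c": None, "loc-d": 148.0}
print(summarize(latest))
```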

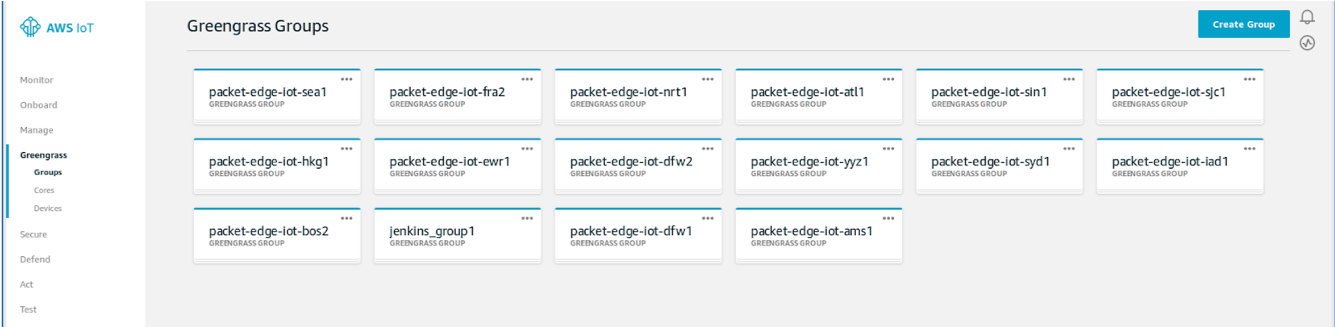

During the deployment, the OpenNebula AWS IoT Greengrass appliances are provided with AWS credentials, and the automation inside creates the required entities on the AWS side. For each monitoring location, a dedicated Greengrass group is created with a monitoring Lambda function and appropriate message subscriptions.

A Distributed Edge Cloud – fully deployed and configured

The IoT end-node infrastructure was created ad hoc on bare metal resources kindly provided by Packet Bare Metal Hosting. The OpenNebula DDC ONEprovision tool was used to deploy the physical hosts and configure them as KVM hypervisors. On these virtualization hosts, we ran the pre-release OpenNebula AWS IoT Greengrass appliance. The virtual machine was parameterized only with AWS credentials, and the automation inside managed the registration on AWS. To finalize the deployment, additional AWS CLI commands configured the message subscriptions and triggered the deployment of our custom monitoring Lambda function.
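To give an idea of what "configuring the message subscriptions" involves, here is a hedged sketch of the data such a step has to supply. It assumes the Greengrass subscription format with `Id`, `Source`, `Subject` (the topic), and `Target` fields, and it assumes that requests and results are routed through the AWS IoT cloud (`"cloud"`); the article does not say how the demo routed its messages. The helper name, topic names, and the ARN are hypothetical placeholders.

```python
def build_subscriptions(lambda_arn, request_topic, result_topic):
    """Build subscription entries routing requests to the Lambda and results back.

    Assumes the Greengrass subscription fields Id, Source, Subject and Target,
    with "cloud" standing for the AWS IoT cloud.
    """
    return [
        {
            "Id": "request-to-lambda",
            "Source": "cloud",
            "Subject": request_topic,
            "Target": lambda_arn,
        },
        {
            "Id": "result-to-cloud",
            "Source": lambda_arn,
            "Subject": result_topic,
            "Target": "cloud",
        },
    ]


# Placeholder ARN and topics, for illustration only.
subscriptions = build_subscriptions(
    "arn:aws:lambda:REGION:ACCOUNT_ID:function:monitor:1",
    "monitoring/request",
    "monitoring/result",
)
for entry in subscriptions:
    print(entry["Source"], "->", entry["Target"], "on", entry["Subject"])
```

A list like this would then be submitted as a new subscription definition for the group, followed by a new group deployment, whether through the AWS CLI as in the demo or through an SDK.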

We deployed the distributed Greengrass infrastructure across 15 different Packet locations. The deployment was divided into 7 phases, and the duration of each phase was measured for each location.

- Phase 1: Deployment of new server on Packet with just base operating system.

- Phase 2: Configuration of host with KVM hypervisor services.

- Phase 3: OpenNebula transfers its drivers and monitors the host.

- Phase 4: KVM virtual machine with AWS IoT Greengrass services is started.

- Phase 5: Wait until VM is booted and configured for use as AWS IoT Greengrass core.

- Phase 6: Configure IoT subscriptions and trigger Lambda function deployment.

- Phase 7: Wait time until monitoring Lambda function returns first measurements.

The following table shows the timings (in seconds) of each phase for all monitoring locations:

| Location | Phase 1 Deploy | Phase 2 Configure | Phase 3 Monitor | Phase 4 Run VM | Phase 5 Bootstrap | Phase 6 GG setup | Phase 7 Wait | TOTAL |
|---|---:|---:|---:|---:|---:|---:|---:|---:|
| AMS1 | 269 | 144 | 3 | 16 | 34 | 14 | 14 | 496 |
| ATL1 | 301 | 198 | 107 | 36 | 18 | 12 | 12 | 686 |
| BOS2 | 516 | 187 | 98 | 35 | 17 | 13 | 11 | 880 |
| DFW1 | 249 | 237 | 134 | 43 | 19 | 13 | 11 | 709 |
| DFW2 | 62 | 267 | 142 | 44 | 24 | 13 | 12 | 567 |
| EWR1 | 66 | 231 | 97 | 164 | 34 | 12 | 14 | 621 |
| FRA2 | 243 | 98 | 11 | 11 | 20 | 14 | 12 | 411 |
| HKG1 | 265 | 385 | 274 | 83 | 24 | 17 | 15 | 1066 |
| IAD1 | 248 | 189 | 93 | 34 | 17 | 12 | 12 | 607 |
| NRT1 | 290 | 472 | 287 | 250 | 39 | 16 | 12 | 1369 |
| SEA1 | 242 | 255 | 152 | 49 | 19 | 13 | 12 | 744 |
| SIN1 | 268 | 316 | 194 | 60 | 24 | 16 | 14 | 895 |
| SJC1 | 270 | 328 | 169 | 260 | 36 | 14 | 13 | 1093 |
| SYD1 | 263 | 512 | 381 | 115 | 23 | 17 | 13 | 1327 |
| YYZ1 | 242 | 198 | 99 | 34 | 19 | 12 | 10 | 617 |

Description of each Packet location.

Deployment of the whole infrastructure, from zero to 15 Greengrass locations with a working monitoring Lambda function, took only 23 minutes 1 second. The infrastructure was destroyed and its resources released in only 27 seconds.
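The per-location totals can be put next to that overall figure with a few lines of Python. The sketch below uses only the TOTAL column from the timing table. The slowest location comes in just under the overall 23 minutes 1 second, while the sum of all totals is several times larger, which suggests (an inference, not something the article states) that the locations were deployed largely in parallel.

```python
# TOTAL column (seconds) from the timing table above.
TOTAL_SECONDS = {
    "AMS1": 496, "ATL1": 686, "BOS2": 880, "DFW1": 709, "DFW2": 567,
    "EWR1": 621, "FRA2": 411, "HKG1": 1066, "IAD1": 607, "NRT1": 1369,
    "SEA1": 744, "SIN1": 895, "SJC1": 1093, "SYD1": 1327, "YYZ1": 617,
}


def format_duration(seconds):
    """Format a number of seconds as 'M min S s'."""
    minutes, secs = divmod(seconds, 60)
    return f"{minutes} min {secs} s"


slowest = max(TOTAL_SECONDS, key=TOTAL_SECONDS.get)
fastest = min(TOTAL_SECONDS, key=TOTAL_SECONDS.get)
combined = sum(TOTAL_SECONDS.values())

print(slowest, format_duration(TOTAL_SECONDS[slowest]))  # NRT1 22 min 49 s
print(fastest, format_duration(TOTAL_SECONDS[fastest]))  # FRA2 6 min 51 s
print("sum of all totals:", format_duration(combined))   # 201 min 28 s
```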

There are various circumstances that affect each deployment phase.

- For phase 1, the deployment times of physical hosts depend entirely on the hosting provider, the availability of suitable hosts, and the performance of the provider’s internal services.

- For the configuration phase 2, the latency between the front-end and the host and the performance of the nearest OS package mirrors both play a role.

- For monitoring phase 3, the time depends on the network latency and throughput to the remote host.

- For phase 4, the start of the Greengrass VM depends on the time required to transfer the 596 MiB image to the remote host. The time to fully boot the VM and configure it as a Greengrass core (phase 5) may be affected by the host CPU performance or AWS API latencies.

- Phase 6 was done directly from the front-end over the AWS CLI (API), so network latencies or AWS API slowness could affect these times.

- Finally, phase 7 depends entirely on how quickly AWS deploys the monitoring Lambda function and puts it in a running state inside our VM with the Greengrass core services.
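As a rough feel for how much the image transfer in phase 4 can matter, the ideal transfer time of the 596 MiB image can be computed for a few sustained throughputs. The throughput values below are hypothetical, chosen only for illustration; the article does not report the actual bandwidth between the front-end and the hosts, and real transfers add protocol overhead and latency effects on top.

```python
def transfer_seconds(size_mib, throughput_mbit_s):
    """Ideal transfer time for size_mib MiB at throughput_mbit_s Mbit/s."""
    size_bits = size_mib * 1024 * 1024 * 8
    return size_bits / (throughput_mbit_s * 1_000_000)


IMAGE_MIB = 596  # image size stated in the article

# Hypothetical sustained throughputs between front-end and remote host.
for mbit_s in (20, 100, 1000):
    print(f"{mbit_s:>5} Mbit/s -> {transfer_seconds(IMAGE_MIB, mbit_s):.0f} s")
```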

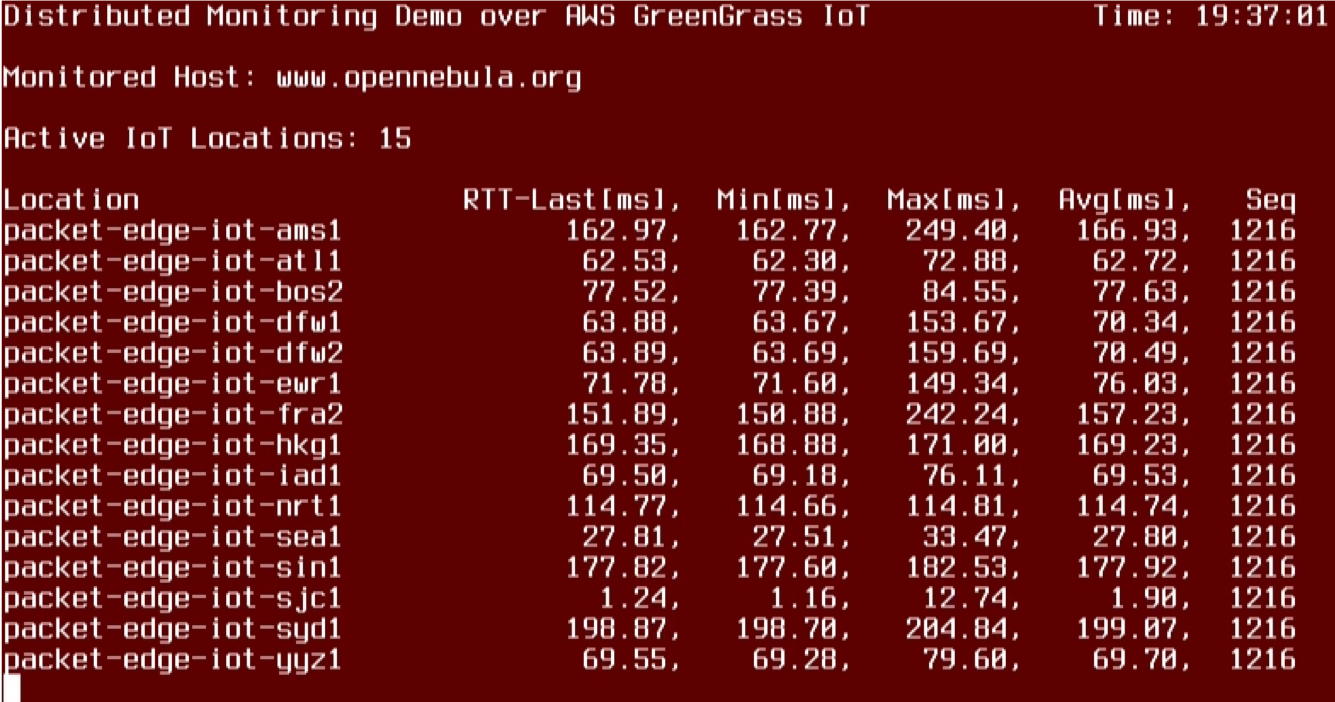

We demonstrated the usability of the demo solution by running our console demo Monitoring Agent mentioned above. We requested monitoring of our website www.opennebula.org and got live latencies measured from different parts of the world at a 1-second interval. The screenshot captures the state on 2019/07/02 19:37:01.

Based on the values from the screenshot, we can see the website is performing excellently with 1 ms latencies from Silicon Valley (SJC1) and just fine with 77 ms latencies from Boston (BOS2), while the worst latencies, above 150 ms, come from the Frankfurt (FRA2), Hong Kong (HKG1), and Sydney (SYD1) locations. These values indicate how well the website is performing for customers in these locations, although they aren’t the only factors involved.

Powerful Capability Packaged with Simplicity

For the demo use case presented here, the distributed infrastructure is crucial for providing performance metrics from different parts of the world. A simple solution could run from a single location, but it would have limited usefulness (e.g., a customer runs a website mainly for Europe, but we measure the site from the USA) and would be prone to local incidents (power outages, network connectivity problems).

The right question here is not why we need a geographically distributed infrastructure, but how to build it and how to design the application running inside. The hardest way is to build the whole solution from scratch, considering all the components you have to select, install, configure, and run (message broker, application server, database, your own application).

An easier way is to use a platform that provides the base application services, and to focus only on your own application logic running inside. One solution to consider is AWS IoT Greengrass, which can run custom standalone code or AWS Lambda functions on your side, and provides easy and secure communication between the connected components. The OpenNebula AWS IoT Greengrass appliance will bring a quick and automatic way to join your OpenNebula-managed computing resources to the AWS IoT Greengrass cloud service, and to use them to run your own code leveraging the IoT features. On boot, the appliance provides your own ready-to-use Greengrass core or device.

The figures from this exercise show how simple it is to create an AWS IoT Greengrass cloud using OpenNebula with Edge resources distributed globally. If you are looking to use AWS IoT Greengrass, the OpenNebula appliance comes packed with everything you need. And with the new OpenNebula edge features, you can take this cloud to the edge automatically, quickly, and with ease.

For the demo, the pre-release version of the appliance was used. The official release is expected in the following weeks.
