Check out our step-by-step tutorial and screencast on how to deploy a single-node Firecracker cloud on AWS, integrated with the Docker Hub marketplace 📘

Application container technologies, like Docker and Kubernetes, are becoming the de facto standards for packaging, deploying and managing applications with greater agility and efficiency. Kubernetes is widely used to orchestrate containers on clusters, offering features for automating application deployment, scaling, and management.

However, Kubernetes doesn’t necessarily work for every use case, nor does it solve every container management challenge an organization might face. For example, its support for multi-tenancy is quite limited, and it cannot guarantee fully secure isolation between tenants. In practice, the only way to isolate teams properly is to give each of them its own cluster. Upgrading clusters and patching vulnerabilities is neither quick nor easy, which in the end requires an expensive full-time admin team.

Moreover, Kubernetes does not offer cloud-like self-service provisioning for users, nor accounting and advanced authorization features for administrators. Cloud providers and cloud management tools, like Amazon Elastic Kubernetes Service (EKS) or Google Kubernetes Engine (GKE), try to bridge these gaps by offering managed Kubernetes-as-a-Service platforms. These solutions, however, add an extra control layer that ends up increasing management complexity, resource consumption, and associated costs.

OpenNebula 5.12 “Firework”

The new OpenNebula 5.12 “Firework” brings exciting new features to the container orchestration ecosystem, providing an innovative open source solution for organizations that need to build and manage a secure, self-service, multi-tenant cloud for serverless computing. Users of an OpenNebula cloud can now run isolated containers without having to provision and manage servers or additional control layers, so they can focus on designing and building their applications instead of managing the underlying infrastructure. OpenNebula’s pioneering approach to container orchestration integrates two main technologies: AWS Firecracker, the virtual machine monitor (VMM) that provisions and manages the microVMs, and Docker Hub, the marketplace of application containers from which users can obtain Docker images and deploy them seamlessly as microVMs.

AWS Firecracker is an open source technology that uses KVM to launch lightweight Virtual Machines, called microVMs, for enhanced security, workload isolation, and resource efficiency. AWS uses it to power its Lambda and Fargate services. Firecracker opens up a whole new world of possibilities as the foundation for serverless solutions that need to deploy critical applications as containers quickly while keeping them securely isolated. With the recent integration of Firecracker as a supported virtualization technology, OpenNebula now offers an innovative answer to the classic dilemma between containers (lighter, but with weaker security) and Virtual Machines (strong security, but higher overhead).
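To make the microVM idea more concrete, here is a minimal sketch of the REST calls that configure and boot a single Firecracker microVM: set the vCPU and memory size, point it at a kernel and a root filesystem, then start it. OpenNebula drives Firecracker through its own virtualization driver, so this is not OpenNebula's code; it only illustrates Firecracker's public API as we understand it (field names follow recent Firecracker releases, and older releases required extra fields). The kernel and rootfs paths are hypothetical placeholders, and the sketch only builds the request bodies rather than sending them over Firecracker's API Unix socket.

```python
import json

# Hypothetical host paths; a real deployment chooses its own files.
KERNEL_PATH = "/var/lib/firecracker/vmlinux"
ROOTFS_PATH = "/var/lib/firecracker/rootfs.ext4"


def microvm_boot_requests(vcpus: int, mem_mib: int) -> list:
    """Return the (method, path, body) calls that configure and start one
    microVM through Firecracker's REST API, in the order they are sent."""
    return [
        # Size of the microVM.
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        # Guest kernel and its command line.
        ("PUT", "/boot-source", {
            "kernel_image_path": KERNEL_PATH,
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        }),
        # Root block device backed by a file on the host.
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": ROOTFS_PATH,
            "is_root_device": True,
            "is_read_only": False,
        }),
        # Boot the configured microVM.
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]


if __name__ == "__main__":
    for method, path, body in microvm_boot_requests(vcpus=1, mem_mib=128):
        print(method, path, json.dumps(body))
```

The small number of calls is part of why microVMs start in a fraction of the time a full VM needs: there is no BIOS or device emulation to configure, only a kernel, a drive, and a size.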

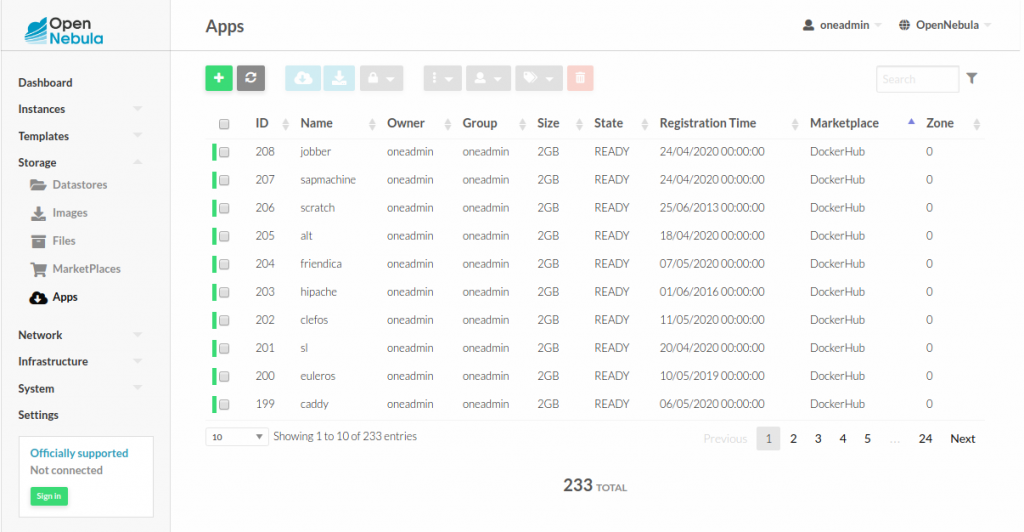

OpenNebula’s integration of Docker Hub as a new native marketplace gives users immediate access to the official Docker Hub images. Through this integration, Docker images can be easily imported into an OpenNebula cloud, following a process similar to the way the OpenNebula Public Marketplace operates. The OpenNebula context packages are installed during the import process, so once an image is imported it is fully functional (including automatic IP configuration, SSH key management and custom scripts). The Docker Hub marketplace also creates a new VM template associated with the imported image. Users can customize this template (e.g. adding the desired kernel, tuning specific parameters, etc.).
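OpenNebula templates are plain text made of `NAME = "value"` attributes and vector attributes written in square brackets. As a minimal sketch, assuming you want to generate such a customization programmatically, the helper below renders a Python dict in that syntax. `render_template` is a hypothetical helper, not part of any OpenNebula library; the attribute values (including image ID 42 for the kernel in the file datastore) are made up for illustration, and quotes inside values are not escaped.

```python
def render_template(attrs: dict) -> str:
    """Render a dict as OpenNebula-style template text.

    Scalar values become NAME = "value"; nested dicts become vector
    attributes written as NAME = [ KEY = "value", ... ].
    Note: quotes inside values are not escaped in this sketch.
    """
    lines = []
    for name, value in attrs.items():
        if isinstance(value, dict):
            inner = ", ".join(f'{k} = "{v}"' for k, v in value.items())
            lines.append(f"{name} = [ {inner} ]")
        else:
            lines.append(f'{name} = "{value}"')
    return "\n".join(lines)


# Hypothetical customization of an imported Docker Hub template:
# more memory and a kernel taken from the file datastore (image ID 42 is made up).
custom = {
    "CPU": 1,
    "MEMORY": 512,
    "OS": {
        "KERNEL_DS": "$FILE[IMAGE_ID=42]",
        "KERNEL_CMD": "console=ttyS0 reboot=k panic=1 pci=off",
    },
}
print(render_template(custom))
```

The generated text could then be merged into the imported template, for example with `onetemplate update`.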

An Enterprise Cloud for Containerized Applications

OpenNebula brings to the container world a set of unique features for building your own enterprise cloud for serverless computing. Its approach speeds up the deployment of containerized applications and multi-tier services within your development workflow. In particular, OpenNebula provides:

- Fast startup times: With OpenNebula you can start containers in seconds by deploying them as Firecracker microVMs, without the need to provision and maintain Dockerized hosts or complex Kubernetes infrastructures.

- Direct access to applications: Deploying a container as a microVM lets it access your networks directly through the Firecracker microVM’s IP address. The microVM can also be accessed via SSH and VNC, providing interactive access that helps with application development and troubleshooting.

- Hypervisor-level security: OpenNebula runs your containerized application inside a Firecracker microVM, which provides VM-grade security and isolation in a multi-tenant environment while preserving the efficiency of lightweight containers.

- Persistent storage: OpenNebula’s datastores can be used as persistent data volumes for containers by attaching them to your Firecracker microVMs. Read-only configuration or data files can be made available to the application inside the microVM through the OpenNebula file datastore.

- Network access: Virtual Networks defined within an OpenNebula cloud (i.e. IPv4, IPv6, Dual Stack, Ethernet) can be easily configured so that containerized applications get direct access to the internet without additional components (e.g. ingress controllers, load balancers, etc.). OpenNebula Virtual Routers can also connect different virtual networks, allowing applications and Virtual Machines attached to them to communicate with each other. Security groups can be used to enforce network security for containerized applications.

- Application horizontal scaling: Applications deployed as complex, multi-tier services can be scaled up and down manually or, via the OneFlow component, automatically, based on user-defined metrics and predefined criteria. An init script can be defined to send application metrics to OneGate, OpenNebula’s metadata server.

- Multi-tenancy: Complete multi-tenant environments with ACLs, users, groups, UNIX-like resource permissions and VDCs, which let cloud admins adapt OpenNebula to their organization’s infrastructure and DevOps requirements and set up independent sets of resources for specific purposes or groups of users (e.g. development, testing, integration and production).

- Geo-distributed applications: Thanks to its elastic cloud infrastructure feature (OneProvision), OpenNebula allows you to build on-demand, geo-distributed infrastructures for running containerized applications at the Edge.
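To illustrate how automatic horizontal scaling can work, here is a minimal sketch of a metric-driven scaling rule. It is loosely modeled on OneFlow elasticity policies (names such as `expression`, `adjust`, `period_number`, `min_vms` and `max_vms` echo that vocabulary), but the evaluation loop is our own simplification: it ignores cooldown periods and the sampling interval, and `ScalingPolicy` and `next_cardinality` are hypothetical names. The metric samples stand in for values that an init script would report to OneGate.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class ScalingPolicy:
    """Simplified scaling rule, loosely modeled on OneFlow elasticity policies."""
    expression: Callable[[float], bool]  # condition on one metric sample, e.g. CPU > 80
    adjust: int                          # VMs to add (positive) or remove (negative)
    period_number: int                   # consecutive samples that must match (>= 1)


def next_cardinality(samples: Sequence[float], current: int,
                     policies: Sequence[ScalingPolicy],
                     min_vms: int, max_vms: int) -> int:
    """Return the new VM count for a role after evaluating policies in order.

    The first policy whose expression holds for its last `period_number`
    samples fires; the result is clamped to [min_vms, max_vms].
    """
    for policy in policies:
        recent = list(samples)[-policy.period_number:]
        if len(recent) == policy.period_number and all(policy.expression(s) for s in recent):
            return max(min_vms, min(max_vms, current + policy.adjust))
    return current


# Hypothetical CPU-usage metric, as an init script might report it to OneGate.
scale_up = ScalingPolicy(lambda cpu: cpu > 80, adjust=1, period_number=3)
scale_down = ScalingPolicy(lambda cpu: cpu < 20, adjust=-1, period_number=3)

print(next_cardinality([85, 90, 95], 2, [scale_up, scale_down], min_vms=1, max_vms=5))  # 3
print(next_cardinality([10, 15, 12], 1, [scale_up, scale_down], min_vms=1, max_vms=5))  # 1 (already at min)
```

Requiring several consecutive matching samples before acting is what keeps a single load spike from adding and then immediately removing microVMs.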

Summary

Although many IT departments providing container execution services have decided to implement their DevOps requirements on top of Kubernetes, betting on a single horse is not necessarily the wisest choice. Organizations requiring container orchestration capabilities should first state clearly what their main objective is, and be careful not to make apples-to-oranges comparisons, incur unexpected costs, or fall into vendor lock-in. Kubernetes is a very complex and demanding technology and, temporary fashions aside, other technologies may actually be the best solution for some use cases.

Some of the features provided by Kubernetes (such as its declarative model, self-healing, automated rollouts and rollbacks, secret and configuration management, service discovery and load balancing) make it ideal for companies that need complete container orchestration services to deploy and manage containerized workloads in production. Yet these organizations must be prepared to absorb high operational costs if they want to build and manage a corporate Kubernetes deployment.

OpenNebula, on the other hand, is an ideal solution for companies that need to build multi-tenant Container-as-a-Service environments with lower operational costs. With it, users and business units can develop and deploy applications quickly and easily, without their organizations having to manage Dockerized hosts or complex orchestration infrastructures such as Kubernetes or OpenShift. With the release of version 5.12 “Firework”, OpenNebula has become a real alternative for implementing an agile, serverless cloud paradigm for containerized workloads in production environments 🚀

| Use Case | Container as a Service | Container Orchestration |
|---|---|---|
| Purpose | Create a CaaS multi-tenant Enterprise Cloud for containerized applications | Manage a cluster of Linux containers as a single system to accelerate development and simplify operations |
| Use | Deliver shared resources to groups of users for secure execution of their container workloads | Deployment, scaling, and operations of containers across a cluster of hosts or VMs for a single user or group of users |
| Applications | Containerized distributed applications | Containerized distributed applications |
| Access to Applications | Easy SSH, VNC access to the microVM | Container exec bash access; harder to troubleshoot |
| Orchestration Approach | Imperative | Declarative |
| Application Management | User-driven application life-cycle management (create, delete, stop, resume) | Application rollback and updates, self-healing, service discovery & load balancing |
| Application Scheduling & Resource Optimization | Application microVMs are placed according to resource requirements (CPU and memory), affinity rules, custom heuristics… | Automatic bin packing is used to place containers based on their resource requirements |
| Network Access | Full support of IPv4, IPv6, Dual Stack, Ethernet networks and security groups | Allocation of IPv4 and IPv6 addresses to Pods and services |
| Persistent Storage | Persistent data block images from datastores can be mounted (via start_script) as storage volumes for applications | Local, network and public cloud storage systems can be automatically mounted as Pod Volumes |
| Lifespan | Short-term | Long-term |
| Tenancy | Multiple tenants | Single tenant |
| Self-Provision | Existing catalogs like Docker Hub | Existing catalogs like Helm Hub (for Kubernetes-ready applications) and Docker Hub (for Pod containers) |
| Security | Hypervisor-level security for multi-tenant environments | Container-level isolation (shared kernel) |
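The scheduling row above contrasts placement by resource requirements and affinity rules with bin packing. As a minimal sketch of the first idea, assuming a filter-then-rank strategy (first discard hosts that cannot fit the microVM or that an anti-affinity rule excludes, then prefer the host with the most free CPU), the code below picks a host for one microVM. It is a simplified stand-in, not OpenNebula's scheduler; `Host`, `place`, the CPU-in-cores unit and the anti-affinity set are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Host:
    name: str
    free_cpu: float     # free CPU, in cores (illustrative unit)
    free_mem_mib: int   # free memory, in MiB


def place(hosts: Iterable[Host], cpu: float, mem_mib: int,
          anti_affinity: Iterable[str] = ()) -> Optional[str]:
    """Choose a host for one microVM using a filter-then-rank strategy.

    1. Filter: keep hosts with enough free CPU and memory that are not
       excluded by the (hypothetical) anti-affinity set.
    2. Rank: prefer the candidate with the most free CPU.
    Returns None when no host can fit the microVM.
    """
    excluded = set(anti_affinity)
    candidates = [h for h in hosts
                  if h.free_cpu >= cpu and h.free_mem_mib >= mem_mib
                  and h.name not in excluded]
    if not candidates:
        return None
    return max(candidates, key=lambda h: h.free_cpu).name


hosts = [Host("node1", 2.0, 1024), Host("node2", 6.0, 512), Host("node3", 4.0, 4096)]
print(place(hosts, cpu=1.0, mem_mib=768))                            # node3
print(place(hosts, cpu=1.0, mem_mib=768, anti_affinity={"node3"}))  # node1
print(place(hosts, cpu=8.0, mem_mib=128))                            # None
```

Ranking by free CPU spreads microVMs across hosts; ranking by the opposite criterion would pack them onto fewer hosts, which is closer to the bin-packing behavior described for Kubernetes.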
