When I ask people whether they know what Qt is, they have either never heard of it or know pretty well what it is. Usually the people who know it have used it for software development, or simply built it because some other piece of software needs Qt. For those of you who don’t know what Qt is, I’d say, without quoting Wikipedia or our own web site, that Qt is a software platform with which you can easily create software that, once written, runs on all platforms supported by Qt. That means you could have your calculator app running on an infotainment screen in your car, any desktop operating system, phone, tablet… you name it, and it would run there because of Qt. Honestly, the number of supported target devices is huge. But to be able to support all these target devices, we at The Qt Company need to ensure that your app actually works everywhere. That’s where our Continuous Integration (CI) comes in.

We spawned over 4 million virtual machines during 2019, each with the intent of testing something that perhaps you created. Qt is open source. Anyone can suggest or implement a new feature or fix a bug they might have found. Your commit would then be peer reviewed and thrown into the mouth of the CI. The CI’s job is either to accept the change and merge it into the code base, or to reject it when it doesn’t pass the rigorous testing it is put through.

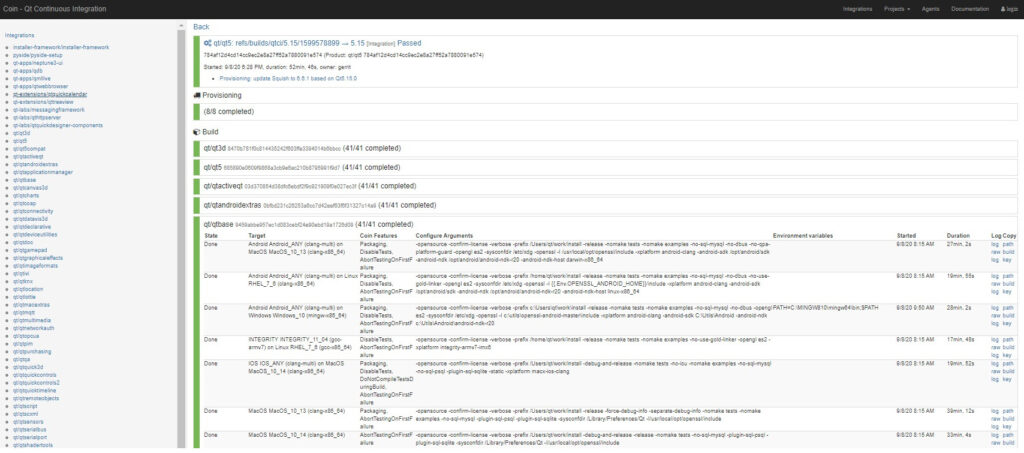

The backbone of our CI is OpenNebula along with Coin. Coin is our own tool, which monitors Gerrit for any changes staged for the CI. Coin is actually quite complex and does the work of figuring out what exactly needs to be built or tested. One staged commit in Gerrit causes a cascade of events where Coin asks OpenNebula for dozens of virtual machines at a time, followed by dozens if not hundreds more. In some cases a single staged commit can produce over 2000 virtual machines, running various versions of Windows, Linux and macOS. These virtual machines compile the new code both natively and by cross-compiling to different target architectures and devices, and they also run the automated tests.

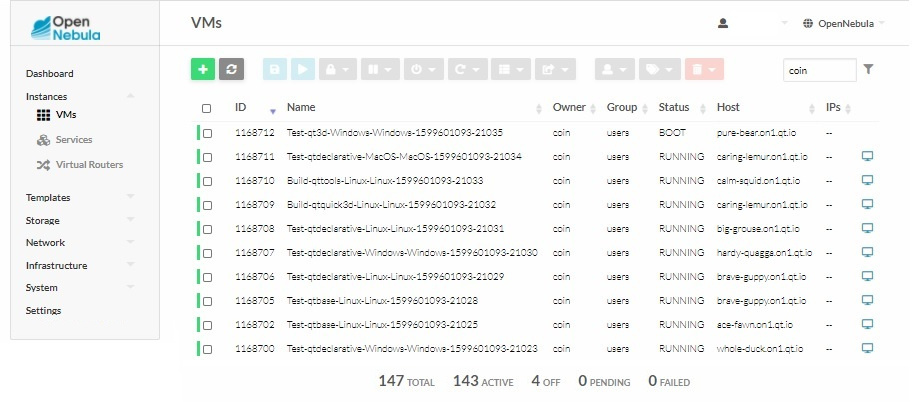

The important task of managing the life cycle of all these virtual machines lands in the hands of OpenNebula. OpenNebula monitors the capacity of our infrastructure and queues up any requests it can’t handle yet because the servers lack computing capacity. It keeps a constant eye on the servers, checking that they respond and are healthy, and takes them offline for maintenance if something goes amiss.
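To make the queueing idea concrete, here is a toy sketch of capacity-aware placement. It is not OpenNebula’s scheduler; the class names, fields and the first-fit policy are all assumptions made for illustration. Requests that fit on a healthy host are placed, and everything else waits in the queue until capacity frees up.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Host:
    # Hypothetical view of one build server.
    name: str
    free_cpu: int
    free_mem_mb: int
    healthy: bool = True


@dataclass
class Request:
    # Hypothetical VM request, as a tool like Coin might send it.
    vm_name: str
    cpu: int
    mem_mb: int


@dataclass
class ToyScheduler:
    hosts: list
    pending: deque = field(default_factory=deque)

    def submit(self, req):
        """Queue a request, then try to place whatever fits."""
        self.pending.append(req)
        return self.dispatch()

    def dispatch(self):
        """Place queued requests on healthy hosts; keep the rest queued."""
        placed = []
        still_waiting = deque()
        while self.pending:
            req = self.pending.popleft()
            # First healthy host with enough free CPU and memory wins.
            host = next(
                (h for h in self.hosts
                 if h.healthy
                 and h.free_cpu >= req.cpu
                 and h.free_mem_mb >= req.mem_mb),
                None,
            )
            if host is None:
                still_waiting.append(req)  # no capacity yet, stay queued
                continue
            host.free_cpu -= req.cpu
            host.free_mem_mb -= req.mem_mb
            placed.append((req.vm_name, host.name))
        self.pending = still_waiting
        return placed
```

Marking a host as `healthy=False` in this sketch simply removes it from consideration, which mirrors the idea of taking a misbehaving server offline without touching the queue.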

The orchestration of the virtual machines happens on the fly. Coin knows what needs to be built, and in its request for a virtual machine it defines how much CPU and RAM we want, depending on the task at hand. OpenNebula creates these virtual machines almost instantly by using backing files over the network, rather than making slow deep clones of the VM images.
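As a rough illustration of what such a request could carry, the sketch below renders a VM template using OpenNebula’s standard `CPU`, `VCPU`, `MEMORY` and `DISK` attributes. The sizing table, the task names, the image name and the helper function are hypothetical; the post does not say what values Coin actually sends.

```python
# Hypothetical per-task sizing; the real numbers used by Coin are not given.
TASK_SIZES = {
    "build": {"vcpu": 8, "memory_mb": 16384},
    "test": {"vcpu": 4, "memory_mb": 8192},
}


def render_vm_template(name, task, image):
    """Return an OpenNebula-style VM template sized for the given task."""
    size = TASK_SIZES[task]
    lines = [
        f'NAME = "{name}"',
        f'CPU = "{size["vcpu"]}"',      # share of physical CPU requested
        f'VCPU = "{size["vcpu"]}"',     # virtual CPUs visible to the guest
        f'MEMORY = "{size["memory_mb"]}"',  # in megabytes
        f'DISK = [ IMAGE = "{image}" ]',
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_vm_template("qtbase-linux-test", "test", "ubuntu-base"))
```

The point is only that the size of each machine is decided per request, so a heavy build and a light test run do not have to share one fixed VM flavour.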

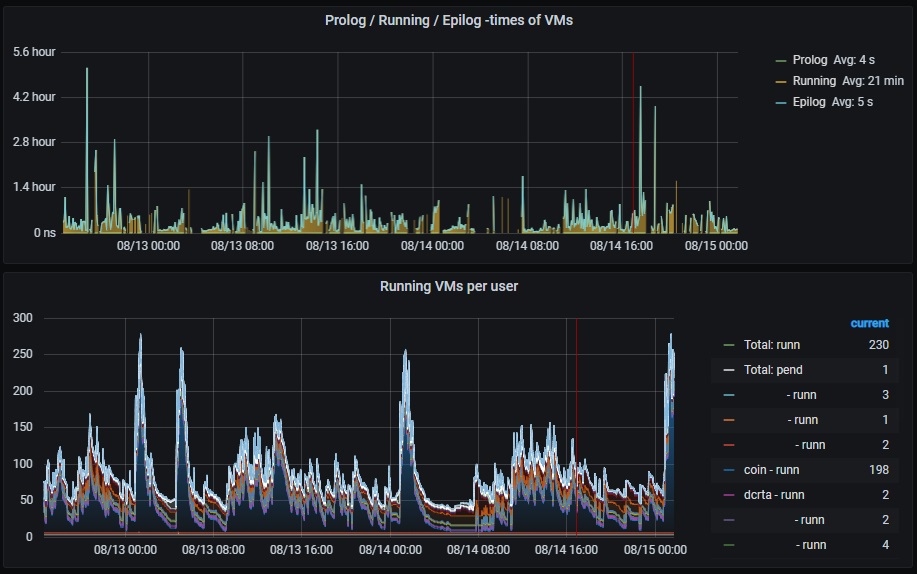

Combined with an SQL database, OpenNebula stores all the data about what it has done. This allows us to collect that data and send it to Grafana for easy tracking. There we can visualize the loads over different time scales, alongside the graphs from the servers themselves. And OpenNebula’s support for custom hooks enables us to run our own actions whenever a virtual machine moves from one state to another, further allowing us to optimize or track the performance of our CI system.
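One thing a state-change hook makes possible is measuring how long each virtual machine spends in each state. The sketch below shows only that bookkeeping; how a hook script actually receives the VM id and state depends on the OpenNebula version and hook configuration, so the `record` interface and the state names used here are assumptions.

```python
from collections import defaultdict


class TransitionLog:
    """Collects (timestamp, state) events per VM and sums time per state."""

    def __init__(self):
        self._events = defaultdict(list)

    def record(self, vm_id, state, timestamp):
        # Hypothetical entry point that a hook script would call.
        self._events[vm_id].append((timestamp, state))

    def durations(self, vm_id):
        """Seconds spent in each state; the last state is still open-ended."""
        events = sorted(self._events[vm_id])
        totals = defaultdict(float)
        # Pair each event with the one that follows it.
        for (start, state), (end, _next_state) in zip(events, events[1:]):
            totals[state] += end - start
        return dict(totals)


if __name__ == "__main__":
    log = TransitionLog()
    log.record(42, "PENDING", 0.0)
    log.record(42, "RUNNING", 12.5)
    log.record(42, "DONE", 100.0)
    print(log.durations(42))
```

Numbers like these, for instance the time a VM waits before it starts running, are the kind of series that can then be pushed to Grafana.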

With the VNC support in OpenNebula, we can go into the desktop view and watch the progress within the virtual machine, and possibly start debugging a problem. We can also leave VMs up and running for longer periods of time if we enable breakpoints in our flow, so that in case of a test failure we stop right there and allow a developer to go in and have a look.

Setting up OpenNebula can be very easy. Sometimes the flexibility of a piece of software can be its downfall. If the software is too generic or relies on dozens of plugins, there’s a risk that the learning curve is too steep. Naturally, the more you can change the behaviour, the more questions you must also answer about how you want it to work. OpenNebula is quite flexible in the areas it covers, yes, but luckily there is pretty good documentation for those tweaks, at least if you ask me. And the OpenNebula dev team hasn’t thrown in a bunch of plugins that are unrelated to the core idea behind it.

The documentation actually gives us the means to implement our own drivers, and we did exactly that when we added support for Parallels. So instead of only running virtual machines via KVM (on Linux), we can also run them in Parallels (on macOS). The code for the drivers isn’t very complex either, so by looking at the existing code you can quite easily figure out what you need to do and whether you might need to start working on your own driver.

The last piece of the puzzle is that OpenNebula really doesn’t care how these build servers came to be. We use MAAS to automatically deploy our servers. After the full deployment process, we have a server online which OpenNebula can connect to. Add the server to the list and it is ready to serve. Ta-da!

I’m eager to see if some day in the near future OpenNebula incorporates Grafana and MAAS as a part of their standard delivery… Although this again starts to sound a bit like the plugin world where everything seems so complex, when all you wanted to do was schedule tasks on hosts, right? Maybe, sometimes, a tool is simply just complete and good enough to perform its original purpose? Wasn’t Winamp actually ready at 2.91? 😉
